SecureScan

Self-hosted security-scanning orchestrator: 14 scanners across code, dependencies, IaC, containers, secrets, DAST, and network targets, normalized into a single deterministic finding stream — fronted by a CLI, a GitHub Action, and a web dashboard.

This is the operator + developer reference for SecureScan v0.11.0. If you have not used SecureScan before, Quick start: your first scan is the place to begin.

What you can do with SecureScan

- Run diff-aware scans on every PR. The Metbcy/securescan@v1 GitHub Action wraps securescan diff, posts a single upserted PR comment of NEW findings, and uploads SARIF to GitHub's Security tab. See GitHub Action.

- Triage findings across rescans. Each finding has a stable fingerprint, so verdicts (false_positive, accepted_risk, fixed, …) and per-finding comments survive DELETE /scans/{id} and reappear on every later scan of the same target. See Triage workflow.

- Watch scans in real time. The dashboard's scan-detail page streams live per-scanner progress over Server-Sent Events. See Real-time scan progress.

- Fan out events to your tools. Outbound webhooks deliver HMAC-signed scan.complete / scan.failed / scanner.failed events to Slack, Discord, or any HTTP receiver, with a durable retry queue. See Webhooks.

- Issue scoped, hashed API keys. v0.8.0 replaces the single shared env-var key with DB-backed keys carrying explicit read / write / admin scopes per route. See API keys.

Audience

This documentation is for three readers, in roughly this order:

| Reader | What they need | Start here |

|---|---|---|

| Operator | Install, configure, harden, deploy, and maintain SecureScan in their org. Health probes, env vars, signed artifacts. | Install → Production checklist |

| Developer | Talk to the API. Ship a PR scan. Verify webhook signatures. | API overview → Webhook payloads |

| Security team | Understand what SecureScan covers, what it deliberately does not, and how findings are scored / suppressed / triaged. | Scan types → Supported scanners |

What this is not

SecureScan is intentionally not a SaaS, not an SBOM database, and not a vulnerability database in its own right. It orchestrates the open-source scanners you already trust (Semgrep, Bandit, Trivy, Checkov, ZAP, nmap, …), normalizes their output into a single shape, and adds diff-awareness, signed artifacts, and a deterministic serialization contract on top. See Architecture overview for the full picture.

How does this compare?

If you're evaluating SecureScan against tools you already use or are also considering, the Compare section has factual side-by-side write-ups:

- vs DefectDojo — different problem (vuln management hub vs PR-loop scanner); many teams use both.

- vs Trivy — SecureScan wraps Trivy and adds 13 more scanners plus a diff-aware PR loop.

- vs Snyk — OSS, self-hosted, deterministic vs SaaS with reachability analysis.

Project links

- Source: github.com/Metbcy/securescan

- Container image: ghcr.io/metbcy/securescan

- Wheel + sdist + sigstore bundles: attached to every GitHub Release

- Changelog: reference/changelog

- Release process: reference/release-process

This site documents the stable public API surface and the

operational behavior. For the full request/response schema of every

endpoint — including the schemas you will not find here — point your

browser at the running server's /docs (FastAPI Swagger UI) or

/redoc. See API endpoints for the entry point.

Install

SecureScan ships three install paths. Pick the one that matches how you intend to run it, not necessarily where it ends up.

1. Container (recommended for production)

The image is multi-arch (amd64 + arm64), comes with all 14 scanners pre-installed at pinned versions, and is what the GitHub Action falls back to when wheel-mode prerequisites are not met.

docker pull ghcr.io/metbcy/securescan:v0.11.0

docker run --rm -v "$PWD:/work" -w /work \
  ghcr.io/metbcy/securescan:v0.11.0 \
  diff . --base-ref origin/main --head-ref HEAD --output github-pr-comment

To run the dashboard backend:

docker run --rm -p 8000:8000 \
  -e SECURESCAN_API_KEY="$(openssl rand -hex 32)" \
  ghcr.io/metbcy/securescan:v0.11.0 \
  serve --host 0.0.0.0 --port 8000

Production deployments must verify the image signature with

cosign before running. See

Verifying signed artifacts.

The image follows the release schedule documented in

Release process. All tags from

v0.2.0 onward are signed.

2. Wheel from PyPI

pip install securescan # latest stable

pip install securescan==0.11.0 # exact pin

# Or, isolated, via pipx:

pipx install securescan

PDF reports (securescan scan ... --output report-pdf) require the

optional [pdf] extra, which pulls in WeasyPrint and its Cairo /

Pango / GObject system-library chain:

pip install 'securescan[pdf]'

The container image ships weasyprint pre-installed, so PDF reports

work out of the box there. Without the extra, requesting

--output report-pdf raises a clear RuntimeError pointing back at

this install step.

The wheel only ships SecureScan itself. The underlying scanner CLIs

(semgrep, bandit, safety, pip-licenses, checkov, trivy,

npm, nmap, ZAP, …) need to be installed separately and on PATH

for the scanners that wrap them to run. Use securescan status to

see which ones are detected:

$ securescan status

Scanner Type Available Version

semgrep code yes 1.71.0

bandit code yes 1.7.5

trivy dependency yes 0.49.1

checkov iac no (run: pip install checkov)

zap dast no (run: brew install zaproxy)

nmap network yes 7.94

...

If you do not want to manage scanner installs yourself, use the container instead.
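As a rough illustration of what a PATH check like this involves, the sketch below uses Python's shutil.which. The binary names come from the list above; the function name detect_scanners and its injectable which parameter are hypothetical, and this is not SecureScan's own detection code.

```python
import shutil

# Binary names taken from the list above (ZAP and others omitted for brevity).
SCANNER_BINARIES = [
    "semgrep", "bandit", "safety", "pip-licenses",
    "checkov", "trivy", "npm", "nmap",
]


def detect_scanners(binaries=SCANNER_BINARIES, which=shutil.which):
    """Map each binary name to its resolved path, or None when it is not on PATH."""
    return {name: which(name) for name in binaries}


def format_status(detected):
    """Render one 'name yes/no path' line per binary."""
    return [
        f"{name:<14}{'yes' if path else 'no':<5}{path or ''}".rstrip()
        for name, path in detected.items()
    ]
```

Calling `print("\n".join(format_status(detect_scanners())))` on the machine where the wheel is installed gives a quick view of which tools would be skipped.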

Verify the wheel signature

Every tagged release is signed with sigstore-python. To verify the wheel:

RELEASE=v0.11.0

gh release download $RELEASE -R Metbcy/securescan \
  -p 'securescan-*.whl' -p 'securescan-*.whl.sigstore.json'

pip install sigstore

sigstore verify identity \
  --cert-identity "https://github.com/Metbcy/securescan/.github/workflows/release.yml@refs/tags/${RELEASE}" \
  --cert-oidc-issuer 'https://token.actions.githubusercontent.com' \
  --bundle securescan-${RELEASE#v}-py3-none-any.whl.sigstore.json \
  securescan-${RELEASE#v}-py3-none-any.whl

The *.sigstore.json bundles ship as GitHub Release assets. PyPI

itself does not host them.

3. GitHub Action (CI/CD)

The composite action wraps securescan diff, posts the upserted PR

comment, and uploads SARIF. It tries the wheel first and falls back

to the pinned container image when scanner binaries are not on

PATH.

# .github/workflows/securescan.yml
on: pull_request
permissions:
  contents: read
  pull-requests: write # required for the upserted PR comment
  security-events: write # required for SARIF upload
jobs:
  securescan:
    runs-on: ubuntu-latest
    steps:
      - uses: actions/checkout@v4
        with:
          fetch-depth: 0 # diff needs both base and head commits
      - uses: Metbcy/securescan@v1
        with:
          scan-types: code,dependency
          fail-on-severity: high

See GitHub Action for the full input reference, inline-review mode, and permission requirements.

From source (development)

Only needed if you are contributing to SecureScan itself.

git clone https://github.com/Metbcy/securescan

cd securescan/backend

python3 -m venv venv && source venv/bin/activate

pip install -e .

pip install semgrep bandit safety pip-licenses checkov # plus any others you want

securescan serve --host 127.0.0.1 --port 8000

In a second shell:

cd securescan/frontend

npm install

npm run dev # http://localhost:3000

See Contributing for the test/lint/release loop.

What gets installed where

| Path | Contents |
|---|---|
| securescan (binary on PATH) | Python entry point. Routes to serve, scan, diff, compare, … |
| ~/.config/securescan/.env | Optional persisted env vars (ZAP creds, etc). Local config. |
| SQLite DB (default ~/.securescan/scans.db) | Scans, findings, triage state, API keys, webhooks, deliveries. |
| /tmp/securescan-backend.log (when serving) | Structured scan-lifecycle log lines. |

The dashboard frontend is a separate Next.js app. The container ships

only the backend; deploy the frontend independently or use

docker compose up from the repo root for an all-in-one local stack.

Next

- Quick start: your first scan (end-to-end walkthrough).

- Production checklist (when you go past localhost).

Quick start: your first scan

This walks through running SecureScan against the

~/Documents/securescan repo itself — backend + frontend up, an

end-to-end scan, and reading the result on the dashboard.

It assumes you have:

- Python 3.12+

- Node.js 20+

- The repo cloned at ~/Documents/securescan

1. Bring up the backend

cd ~/Documents/securescan/backend

python3 -m venv venv && source venv/bin/activate

pip install -e .

pip install semgrep bandit safety pip-licenses checkov

securescan serve --host 127.0.0.1 --port 8000

You should see something like:

INFO SECURESCAN_API_KEY not set; API is unauthenticated (dev mode).

INFO Started server process [12345]

INFO Waiting for application startup.

INFO Application startup complete.

INFO Uvicorn running on http://127.0.0.1:8000 (Press CTRL+C to quit)

Confirm liveness and readiness:

$ curl -s http://127.0.0.1:8000/health

{"status":"ok"}

$ curl -s http://127.0.0.1:8000/ready | jq .

{

"status": "ready",

"checks": {

"database": "ok",

"scanner_registry": "ok"

}

}

Dev mode means no authentication is required. The startup banner

warns you. For anything past localhost, set SECURESCAN_API_KEY

or create DB-backed keys — see API keys and

Production checklist.

2. Bring up the frontend

In a second shell:

cd ~/Documents/securescan/frontend

npm install

npm run dev

Open http://localhost:3000. The topbar API

status indicator should be green: the dashboard is talking to the

backend at http://localhost:8000.

3. Kick off a scan via the API

You can use the dashboard, but the API path is the easiest to show

in a guide. Request a code + dependency scan of the SecureScan

repo itself:

curl -s -X POST http://127.0.0.1:8000/api/v1/scans \
  -H 'Content-Type: application/json' \
  -d '{
    "target_path": "/home/you/Documents/securescan",
    "scan_types": ["code", "dependency"]
  }' | jq .

Response:

{

"id": "0f1a93cb-44c2-4c8e-9f92-0a7c5a2e1b51",

"target_path": "/home/you/Documents/securescan",

"scan_types": ["code", "dependency"],

"status": "pending",

"started_at": "2026-04-29T20:11:05.123456",

"completed_at": null,

"scanners_run": [],

"scanners_skipped": []

}

The backend immediately starts running the requested scanners as a

background asyncio task. Save the id — call it $SCAN_ID.

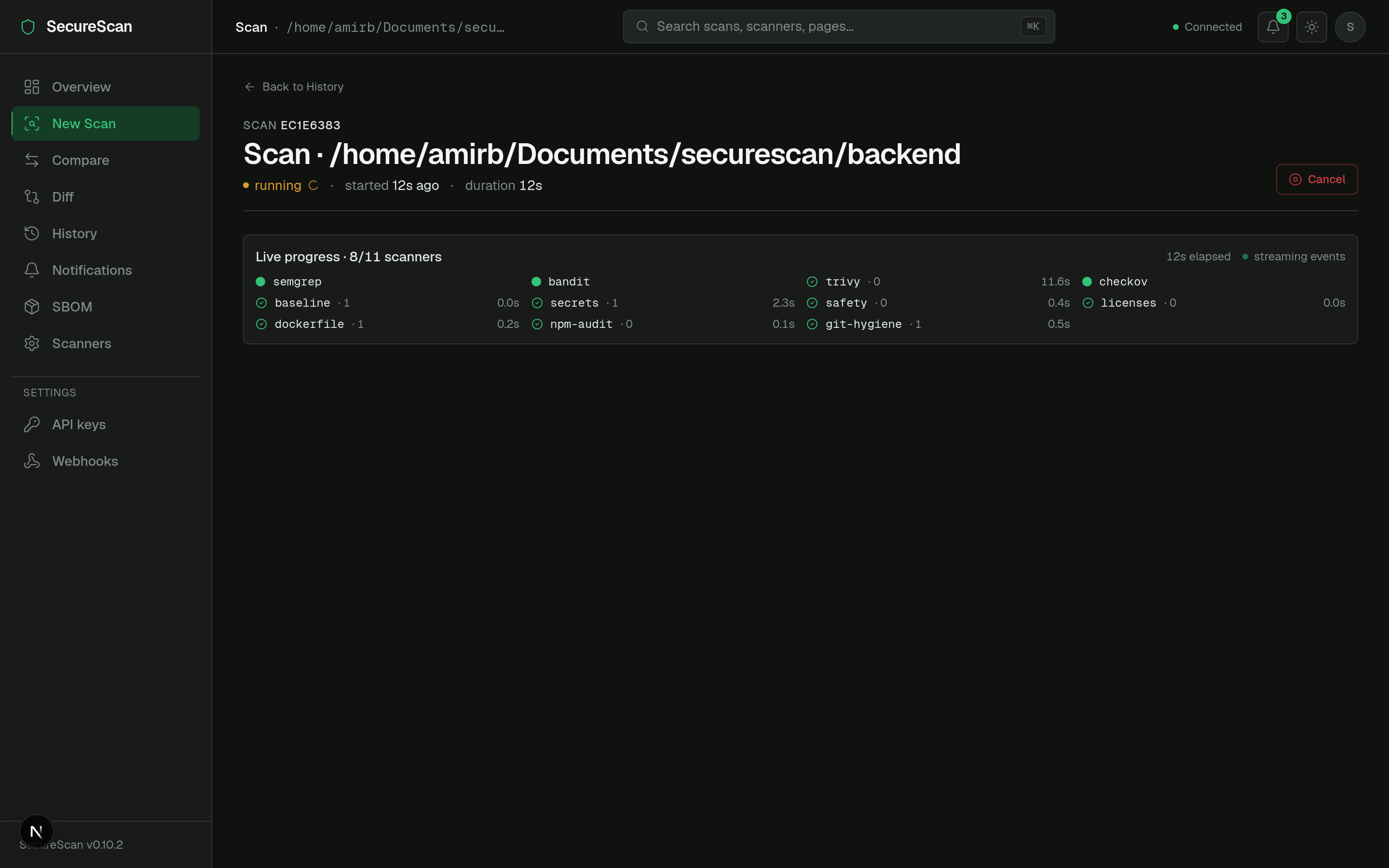
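If you would rather script this step than use curl, the same request can be built with the Python standard library. This is a minimal sketch: the helper names build_scan_request and start_scan are hypothetical, and only the URL, the body fields, and the id field of the response are taken from the example above.

```python
import json
import urllib.request


def build_scan_request(base_url, target_path, scan_types, api_key=None):
    """Build (but do not send) the POST /api/v1/scans request shown above."""
    body = json.dumps(
        {"target_path": target_path, "scan_types": scan_types}
    ).encode("utf-8")
    headers = {"Content-Type": "application/json"}
    if api_key:  # dev mode needs no key; authenticated deployments send X-API-Key
        headers["X-API-Key"] = api_key
    return urllib.request.Request(
        f"{base_url}/api/v1/scans", data=body, headers=headers, method="POST"
    )


def start_scan(request, timeout=10):
    """Send the request and return the new scan's id from the JSON response."""
    with urllib.request.urlopen(request, timeout=timeout) as resp:
        return json.load(resp)["id"]
```

Keeping the request construction separate from the network call makes the payload easy to inspect or unit-test without a running backend.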

4. Watch progress live

Open the dashboard at

http://localhost:3000/scan/<SCAN_ID>. Above the StatLine you will

see <ScanProgressPanel> with one row per scanner, each going

queued → running → complete as the orchestrator drives them. This is

the v0.7.0 SSE stream; on the wire it looks like:

curl -N "http://127.0.0.1:8000/api/v1/scans/$SCAN_ID/events"

event: scan.start

data: {"scan_types":["code","dependency"]}

event: scanner.start

data: {"name":"semgrep"}

event: scanner.complete

data: {"name":"semgrep","duration_s":4.31,"findings_count":7}

event: scanner.start

data: {"name":"bandit"}

...

event: scan.complete

data: {"findings_count":12,"risk_score":34.2}

In an authenticated deployment, browsers cannot send X-API-Key on an

EventSource. The dashboard exchanges the API key for a short-lived

signed event token via POST /api/v1/scans/{id}/event-token.

See SSE event tokens.
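For non-browser consumers, the stream can be read with a few lines of parsing. The sketch below handles only the event: and data: fields seen in the sample above and assumes the standard text/event-stream framing, where a blank line ends each event; parse_sse is a hypothetical helper, not part of SecureScan.

```python
import json


def parse_sse(lines):
    """Yield (event, data) pairs from an iterable of decoded SSE lines."""
    event, data = None, []
    for raw in lines:
        line = raw.rstrip("\r\n")
        if not line:  # a blank line terminates the current event
            if data:
                yield (event or "message", json.loads("\n".join(data)))
            event, data = None, []
        elif line.startswith("event:"):
            event = line[len("event:"):].strip()
        elif line.startswith("data:"):
            data.append(line[len("data:"):].strip())
        # comment lines (":...") and other fields are ignored in this sketch
```

Any iterable of text lines works as input, for example the decoded lines of an open HTTP response to the events endpoint.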

5. Read the results

Once status flips to completed, the scan-detail page shows:

- A PageHeader with the target path, scan id, and total finding count.

- A StatLine with risk score, severity counts, scanners run, and total duration.

- A scanner-chip strip showing which scanners ran, which were skipped, and the install hint for skipped ones.

- A findings table with columns:

| Column | What it shows |
|---|---|
| Severity | critical / high / medium / low / info with a colored dot prefix. |
| Title | One-line finding summary from the scanner. |
| File:line | Mono-spaced; click to expand the row for the matched line and AI explanation. |
| Rule | Scanner-specific rule id (B106, python.lang.security.audit.eval-detected). |
| Scanner | Origin scanner (semgrep, bandit, trivy, …). |
| Compliance | Tag chips: OWASP-A03, PCI-DSS-6.5.1, SOC2-CC7.1, etc. (Compliance) |
| Status | Triage verdict pill: new (default), triaged, false_positive, accepted_risk, fixed, wont_fix. (Triage) |

The same data is available over the API:

curl -s "http://127.0.0.1:8000/api/v1/scans/$SCAN_ID/findings" | jq '.[0]'

{

"id": "f-2c1...",

"scanner": "semgrep",

"scan_type": "code",

"severity": "high",

"title": "Use of eval()",

"description": "...",

"file_path": "backend/securescan/cli.py",

"line": 142,

"rule_id": "python.lang.security.audit.eval-detected",

"fingerprint": "9d2f...",

"compliance_tags": ["OWASP-A03"],

"state": null,

"metadata": { "suppressed_by": null }

}

state is null until you set a triage verdict — see

Triage workflow.

6. Triage one finding

Suppose row 1 is a false positive in test code. Set the verdict:

FP=$(curl -s "http://127.0.0.1:8000/api/v1/scans/$SCAN_ID/findings" \
  | jq -r '.[0].fingerprint')

curl -s -X PATCH \
  "http://127.0.0.1:8000/api/v1/findings/$FP/state" \
  -H 'Content-Type: application/json' \
  -d '{"status":"false_positive","note":"intentional in test fixture","updated_by":"alice"}'

Response:

{

"fingerprint": "9d2f...",

"status": "false_positive",

"note": "intentional in test fixture",

"updated_at": "2026-04-29T20:14:22.000000",

"updated_by": "alice"

}

The default findings filter hides false_positive (along with

accepted_risk and wont_fix); the row disappears on next reload.

This verdict survives every later scan of the same target —

fingerprints are cross-scan stable.
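The effect of the default filter can be pictured as a lookup keyed on fingerprint. The sketch below is illustrative only: visible_findings is a hypothetical helper, and the in-memory dict stands in for the stored triage state.

```python
# Verdicts hidden by the default filter, per the paragraph above.
HIDDEN_BY_DEFAULT = {"false_positive", "accepted_risk", "wont_fix"}


def visible_findings(findings, states):
    """Return findings whose verdict, looked up by fingerprint, is not hidden.

    `states` maps fingerprint -> status; a missing entry means no verdict yet.
    """
    return [
        f for f in findings
        if states.get(f["fingerprint"]) not in HIDDEN_BY_DEFAULT
    ]
```

Because the lookup uses the fingerprint rather than the scan id, the same `states` mapping hides the finding in every later scan that reproduces it.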

7. Clean up

curl -X DELETE "http://127.0.0.1:8000/api/v1/scans/$SCAN_ID"

# 204 — scan + findings rows cascade-deleted.

# Triage verdicts persist (keyed on fingerprint, not scan id).

What you just touched

- The scan engine — see How scans work.

- The API — see API overview.

- The SSE stream — see Real-time scan progress.

- The triage workflow — see Triage.

Where to next

- Run a CI scan: GitHub Action.

- Deploy past localhost: Production checklist.

- Hook another tool to scan completion: Webhooks.

Architecture overview

SecureScan is three pieces that talk over a stable JSON API:

- A FastAPI backend that schedules scanner subprocesses, persists findings to SQLite, and exposes the REST API.

- A Next.js dashboard that consumes that API.

- A CLI / GitHub Action that runs scans either directly or against a backend over HTTP.

Everything centers on the finding: a normalized record produced by one of the 14 scanners, deduplicated across scanners, fingerprinted for cross-scan identity, and serialized deterministically.

Component diagram

flowchart LR

subgraph Client[Client surfaces]

CLI[securescan CLI]

Dash[Next.js dashboard]

Hook[Outbound HTTP receiver]

GHA[GitHub Action]

end

subgraph Server[FastAPI backend single uvicorn worker]

Auth[auth.py + api_keys.py]

Scans[/api/v1/scans/]

Triage[/api/v1/findings/state/]

Hooks[/api/v1/webhooks/]

Notify[/api/v1/notifications/]

Bus[(in-process event bus)]

Disp[Webhook dispatcher async task]

Pipe[Scanner orchestrator pipeline.py]

end

DB[(SQLite scans.db)]

Scanners[14 scanner subprocesses]

Dash <--> Scans

CLI --> Scans

GHA --> Scans

Scans --> Auth

Scans --> Pipe

Pipe --> Scanners

Pipe --> Bus

Bus --> Dash

Bus --> Notify

Bus --> Disp

Disp --> Hook

Scans --> DB

Triage --> DB

Hooks --> DB

Notify --> DB

Disp --> DB

Scan lifecycle

POST /api/v1/scans returns immediately with a pending scan row.

The scanners run as a background asyncio task on the same uvicorn

worker; the request handler does not block on them.

sequenceDiagram

autonumber

participant C as Client

participant API as FastAPI handler

participant DB as SQLite

participant O as Orchestrator (_run_scan)

participant S as Scanner subprocess

participant Bus as Event bus

C->>API: POST /api/v1/scans

API->>DB: INSERT scans (status=pending)

API-->>C: 200 {id, status: pending}

Note over API,O: asyncio.create_task(_run_scan(id))

O->>Bus: publish scan.start

loop for each requested scanner

O->>Bus: publish scanner.start

O->>S: spawn subprocess (semgrep / trivy / ...)

S-->>O: stdout JSON / SARIF

O->>Bus: publish scanner.complete (duration_s, findings_count)

end

O->>DB: UPDATE scans (status=completed) + INSERT findings

O->>Bus: publish scan.complete

Bus->>Bus: side-effect: enqueue webhook delivery

Bus->>Bus: side-effect: insert in-app notification

Every event in this flow is published to the in-process event bus, which fans out to:

- The dashboard's SSE subscribers (live progress).

- The outbound webhook dispatcher (webhook_dispatcher.py).

- The notifications table (bell-icon in-app feed).
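The fan-out can be pictured as a small publish/subscribe object in which every consumer owns a queue. This is a simplified sketch of the pattern, not the actual bus: the EventBus class and its method names are assumptions made for illustration.

```python
import asyncio


class EventBus:
    """Tiny in-process pub/sub: every subscriber gets its own queue."""

    def __init__(self):
        self._subscribers = []

    def subscribe(self):
        queue = asyncio.Queue()
        self._subscribers.append(queue)
        return queue

    def publish(self, event, data):
        # Each subscriber receives its own copy of the (event, data) pair.
        for queue in self._subscribers:
            queue.put_nowait((event, data))


async def demo():
    bus = EventBus()
    # Stand-ins for the three consumers listed above.
    sse, webhooks, notifications = bus.subscribe(), bus.subscribe(), bus.subscribe()
    bus.publish("scan.complete", {"findings_count": 12})
    return [await q.get() for q in (sse, webhooks, notifications)]
```

Since the queues live in one process's memory, a second worker process would never see these events, which is the reason for the single-worker constraint described later in this page.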

Data model

erDiagram

scans ||--o{ findings : has

scans ||--o{ scanner_skips : has

finding_states ||--o{ finding_comments : has

webhooks ||--o{ webhook_deliveries : has

api_keys ||--|| principals : authenticates

notifications

scans {

text id PK

text target_path

text scan_types

text status

timestamp started_at

timestamp completed_at

}

findings {

text id PK

text scan_id FK

text fingerprint

text scanner

text severity

text rule_id

text file_path

int line

text metadata_json

}

finding_states {

text fingerprint PK

text status

text note

timestamp updated_at

}

finding_comments {

text id PK

text fingerprint FK

text text

}

api_keys {

text id PK

text name

text prefix

text key_hash

text scopes_json

timestamp created_at

timestamp last_used_at

timestamp revoked_at

}

webhooks {

text id PK

text url

text secret

text event_filter_json

boolean enabled

}

webhook_deliveries {

text id PK

text webhook_id FK

text event

text payload_json

text status

int attempt

timestamp next_attempt_at

}

notifications {

text id PK

text severity

text title

text body

timestamp created_at

timestamp read_at

}

The cross-cutting identity in this schema is the fingerprint: a

SHA-256 over (scanner, rule_id, file_path, normalized_line_context, cwe)

that stays stable across scans of the same target. Triage state and

comments are keyed on the fingerprint, not the scan id, which is why

deleting a scan does not lose the verdict — see

Triage workflow for the full story.
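To make the idea concrete, here is a minimal sketch of a fingerprint over those five fields. The whitespace-collapsing normalization and the NUL field separator are assumptions chosen for illustration; the real construction in fingerprint.py may differ.

```python
import hashlib


def normalize_line_context(line_text):
    """Collapse whitespace so re-indenting a line keeps its identity (assumed rule)."""
    return " ".join(line_text.split())


def fingerprint(scanner, rule_id, file_path, line_text, cwe=""):
    """SHA-256 over the five fields; the NUL separator is an illustrative choice."""
    parts = [scanner, rule_id, file_path, normalize_line_context(line_text), cwe or ""]
    return hashlib.sha256("\x00".join(parts).encode("utf-8")).hexdigest()
```

Note that the line number is not part of the input, so a finding that merely moves up or down within a file keeps the same identity in this sketch.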

Authentication topology

flowchart LR
Req[Incoming request] --> Mw[require_api_key]
Mw -->|X-API-Key matches env| Env[env Principal: all scopes]
Mw -->|ssk_* matches DB row| DB[DB Principal: row.scopes]
Mw -->|?event_token=...| Tok[Validate HMAC + rehydrate Principal]
Mw -->|none + AUTH_REQUIRED=0| Dev[None: dev mode passthrough]
Mw -->|none + AUTH_REQUIRED=1| Block[401]
Env --> Scope[require_scope]
DB --> Scope
Tok --> Scope
Dev --> Scope
Scope -->|scope OK| Handler[Route handler]
Scope -->|missing scope| Forbid[403]

See Authentication overview for the full path

through auth.py, including event-token auth on the SSE route.
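The decision flow in the diagram can be sketched in plain Python. This is a simplification under stated assumptions: the event-token branch is left out, keys are looked up by an unsalted SHA-256 digest (the real store is salted), a presented but unknown key is rejected with 401, and the names authenticate, AuthError, and ALL_SCOPES are hypothetical.

```python
import hashlib
import hmac
from dataclasses import dataclass


class AuthError(Exception):
    def __init__(self, status):
        super().__init__(f"HTTP {status}")
        self.status = status


@dataclass(frozen=True)
class Principal:
    source: str
    scopes: frozenset


ALL_SCOPES = frozenset({"read", "write", "admin"})


def authenticate(api_key, env_key, db_keys, auth_required):
    """Resolve a request to a Principal, None (dev mode), or a 401.

    `db_keys` maps sha256(key) hex digests to scope sets (unsalted here for brevity).
    """
    if api_key and env_key and hmac.compare_digest(api_key.encode(), env_key.encode()):
        return Principal("env", ALL_SCOPES)
    if api_key and api_key.startswith("ssk_"):
        digest = hashlib.sha256(api_key.encode()).hexdigest()
        if digest in db_keys:
            return Principal("db", frozenset(db_keys[digest]))
    if api_key is None and not auth_required:
        return None  # dev mode passthrough
    raise AuthError(401)


def require_scope(principal, scope):
    """Dev-mode passthrough (None) is allowed; otherwise the scope must be present."""
    if principal is not None and scope not in principal.scopes:
        raise AuthError(403)
```

Splitting authentication (who is calling) from the scope check (what they may do) mirrors the two stages in the diagram: a missing or unknown key fails early with 401, while a valid key without the right scope fails later with 403.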

Process boundaries

| Process | Responsibility | Restart safe? |

|---|---|---|

| uvicorn worker (single) | Serve API, run orchestrator, dispatch webhooks, drive event bus. | Yes: pending webhook deliveries resume from DB. |

| Scanner subprocesses | One per scanner per scan, spawned by the orchestrator. | Killed when scan is cancelled (POST /cancel). |

| Frontend (Next.js) | Pure read/write client of the API. Stateless. | Yes — page reload re-subscribes the SSE stream. |

The event bus and the webhook dispatcher are both in-process

singletons. Run uvicorn with --workers 1. To scale horizontally

today, run multiple separate single-worker instances behind a

sticky-session load balancer keyed on scan_id. Multi-process pubsub

(Redis backplane) is on the roadmap.

See Single-worker constraint.

Determinism contract

For the diff-aware PR comment and the SARIF Security-tab dedup to work, the renderer must produce byte-identical output for the same inputs. SecureScan enforces this by:

- Sorting findings by a canonical key (severity_rank desc, scanner, rule_id, file_path, line, title).

- Excluding wall-clock timestamps from byte-identity-sensitive sections. SECURESCAN_FAKE_NOW pins the only time-derived field.

- Deduplicating + ordering rule lists in SARIF.

- Computing each finding's partialFingerprints.primaryLocationLineHash from the stable per-finding fingerprint.

- Auto-disabling AI enrichment when CI=true (it is non-deterministic).

Without these properties, every PR push would post a new PR comment instead of upserting the existing one, and SARIF re-uploads would look like a wave of new alerts. See Findings & severity for the fingerprint construction.
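A minimal sketch of the first property, canonical ordering followed by stable serialization, might look like this. The numeric severity ranks and the render function are assumptions made for illustration; only the key order comes from the list above.

```python
import json

# Assumed ranks; only the relative order (critical highest) matters here.
SEVERITY_RANK = {"critical": 4, "high": 3, "medium": 2, "low": 1, "info": 0}


def canonical_key(finding):
    """severity_rank desc, then scanner, rule_id, file_path, line, title."""
    return (
        -SEVERITY_RANK[finding["severity"]],
        finding["scanner"],
        finding["rule_id"],
        finding["file_path"],
        finding["line"],
        finding["title"],
    )


def render(findings):
    """Serialize findings so that input order never affects the output bytes."""
    ordered = sorted(findings, key=canonical_key)
    return json.dumps(ordered, sort_keys=True, separators=(",", ":")).encode("utf-8")
```

Sorting the records and the JSON object keys removes the two most common sources of byte-level drift: scanner completion order and dict insertion order.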

Source layout

| Directory | Contents |
|---|---|
| backend/securescan/api/ | FastAPI routers (scans, triage, webhooks, notifications, keys, …). |
| backend/securescan/scanners/ | One module per scanner; all subclass BaseScanner. |
| backend/securescan/auth.py | Principal, require_api_key, require_scope. |
| backend/securescan/api_keys.py | Key generation + salted-SHA-256 hashing. |
| backend/securescan/event_tokens.py | SSE event-token mint/verify. |
| backend/securescan/webhook_dispatcher.py | Durable webhook delivery worker. |
| backend/securescan/pipeline.py | The scan orchestrator (_run_scan lives in api/scans.py). |
| backend/securescan/fingerprint.py | Cross-scan finding identity. |
| backend/securescan/dedup.py | Cross-scanner deduplication. |
| backend/securescan/scoring.py | Risk-score formula (severity rank × scanner confidence). |
| frontend/src/app/ | Next.js app router pages; see Dashboard tour. |
| action/ | The composite GitHub Action. |

Next

- Run a scan from scratch: Quick start.

- Operate it past localhost: Production checklist.

- Wire it into CI: GitHub Action.

How scans work

A scan is an orchestrated sweep of one or more scanner subprocesses

against a target — almost always a directory in the local filesystem,

sometimes a URL (DAST) or hostname (network). The result is a row in

the scans table plus N rows in findings, normalized into a

single shape regardless of which scanner produced them.

Lifecycle

stateDiagram-v2

[*] --> pending : POST /api/v1/scans

pending --> running : orchestrator picks it up

running --> completed : every scanner returned (or skipped)

running --> failed : orchestrator raised before terminal

running --> cancelled : POST /api/v1/scans/{id}/cancel

completed --> [*]

failed --> [*]

cancelled --> [*]

Every transition emits a structured log line on the

securescan.scan logger AND publishes to the in-process event bus

(Real-time scan progress). On the bus,

each scanner has its own sub-lifecycle:

scan.start

scanner.start # one per scanner that will run

scanner.complete # OR

scanner.skipped # tool not on PATH; payload includes install_hint

scanner.failed # tool crashed; error truncated to 200 chars

scan.complete # OR

scan.failed # OR

scan.cancelled

Source: see _log_scan_event in

backend/securescan/api/scans.py.
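The scan-level lifecycle is small enough to express as a transition table. The sketch below transcribes the state diagram above and adds pending to cancelled, which the Cancellation section allows; TRANSITIONS, TERMINAL, and advance are hypothetical names, not identifiers from the codebase.

```python
# Allowed scan-status transitions, transcribed from the state diagram above.
# pending -> cancelled is added because cancelling a pending scan returns 200.
TRANSITIONS = {
    "pending": {"running", "cancelled"},
    "running": {"completed", "failed", "cancelled"},
    "completed": set(),
    "failed": set(),
    "cancelled": set(),
}

# A status is terminal when nothing can follow it.
TERMINAL = {status for status, nxt in TRANSITIONS.items() if not nxt}


def advance(current, new):
    """Return `new` if the transition is legal, otherwise raise ValueError."""
    if new not in TRANSITIONS[current]:
        raise ValueError(f"illegal transition {current} -> {new}")
    return new
```

A table like this also explains the 409 responses documented below: cancelling a scan whose status is already in TERMINAL has no legal transition to take.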

What runs, in what order

POST /api/v1/scans accepts:

{

"target_path": "/abs/path/to/repo",

"scan_types": ["code", "dependency"],

"target_url": null,

"target_host": null

}

The orchestrator looks up scanners by scan_type

(see Supported scanners) and runs them

in registry order. Each scanner is a Python class with an async run()

that shells out to the underlying tool, parses stdout, and returns a

list of Finding objects.

scanners_run and scanners_skipped are persisted on the scan row, so a scanner whose binary is not installed never silently disappears: the dashboard shows it under "Skipped (N)" with the install hint surfaced from the scanner's install_hint property.

Cancellation

curl -X POST -H "X-API-Key: $K" \
  http://127.0.0.1:8000/api/v1/scans/$SCAN_ID/cancel

- Returns 200 with status: cancelled if the scan was running or pending.

- Returns 409 if the scan is already completed / failed / cancelled.

- Returns 404 for an unknown id.

The orchestrator's asyncio task is cancelled, which propagates

CancelledError into the currently-running subprocess wrapper and

asks it to terminate.
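The cancellation path can be reproduced with plain asyncio. This is a sketch of the general pattern rather than the actual wrapper: run_tool and cancel_demo are hypothetical names, and a sleeping Python child process stands in for a slow scanner.

```python
import asyncio
import sys


async def run_tool(argv, spawned):
    """Run one scanner subprocess; on cancellation, terminate it before re-raising."""
    proc = await asyncio.create_subprocess_exec(*argv, stdout=asyncio.subprocess.PIPE)
    spawned.append(proc)
    try:
        stdout, _ = await proc.communicate()
        return stdout
    except asyncio.CancelledError:
        proc.terminate()   # ask the tool to stop (SIGTERM on POSIX)
        await proc.wait()  # reap it so no orphaned process is left behind
        raise


async def cancel_demo():
    spawned = []
    # Stand-in for a slow scanner: a child process that sleeps for 30 seconds.
    argv = [sys.executable, "-c", "import time; time.sleep(30)"]
    task = asyncio.create_task(run_tool(argv, spawned))
    while not spawned:  # wait until the subprocess actually exists
        await asyncio.sleep(0.01)
    task.cancel()
    try:
        await task
    except asyncio.CancelledError:
        pass
    return spawned[0].returncode
```

Re-raising CancelledError after cleanup matters: swallowing it would make the task look as if it had finished normally instead of being cancelled.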

Deletion

curl -X DELETE -H "X-API-Key: $K" \
  http://127.0.0.1:8000/api/v1/scans/$SCAN_ID

- Returns 204 on success; findings are cascade-deleted.

- Returns 409 if the scan is running or pending (cancel first).

- Returns 404 for an unknown id.

Deleting a scan does not delete triage verdicts (finding_states)

or per-finding comments. Those are keyed on the cross-scan

fingerprint, so they reactivate when a matching finding reappears in

a later scan. This is deliberate: a "false positive" verdict outlives

the scan that produced the original finding. See

Triage workflow.

Determinism

Two scans of the same git ref produce the same findings, in the same order, with the same fingerprints. This is foundational — without it, the v0.2.0 PR-comment upsert would behave like "post a new comment every push", and SARIF re-uploads to GitHub's Security tab would look like a flood of new alerts.

The relevant guarantees are:

- Findings are sorted canonically: severity_rank desc, scanner, rule_id, file_path, line, title.

- AI enrichment is auto-disabled when CI=true is set (it is non-deterministic). Pass --ai to override.

- Wall-clock timestamps are excluded from byte-identity-sensitive payload sections. SECURESCAN_FAKE_NOW pins the one time-derived field that exists.

- SARIF rule lists are deduplicated and ordered.

See Findings & severity for the fingerprint construction and Architecture overview for the end-to-end determinism contract.

CLI mode (no backend)

securescan scan ./your-repo runs the same orchestrator without involving the backend or DB. The output goes to stdout, or to the path given with --output-file, in whatever format you ask for:

securescan scan ./your-repo \
  --type code --type dependency \
  --output sarif --output-file results.sarif

securescan scan ./your-repo \
  --type code \
  --output json --output-file findings.json

CLI mode is what securescan diff uses internally — it scans both

sides of the diff and classifies findings into NEW / FIXED /

UNCHANGED. See CLI commands.
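Because fingerprints are stable across scans, the classification reduces to set arithmetic over the two sides. The sketch below assumes each side has already been reduced to a fingerprint-to-finding mapping; classify is a hypothetical helper, not the CLI's internal function.

```python
def classify(base, head):
    """Split findings into NEW / FIXED / UNCHANGED by fingerprint.

    `base` and `head` map fingerprint -> finding for each side of the diff.
    """
    base_fps, head_fps = set(base), set(head)
    return {
        # Present only on the head side: introduced by the change.
        "NEW": [head[fp] for fp in sorted(head_fps - base_fps)],
        # Present only on the base side: resolved by the change.
        "FIXED": [base[fp] for fp in sorted(base_fps - head_fps)],
        # Present on both sides: untouched by the change.
        "UNCHANGED": [head[fp] for fp in sorted(head_fps & base_fps)],
    }
```

Iterating over sorted fingerprints keeps the output order independent of dict or set ordering, in line with the determinism guarantees above.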

Failure modes

| Symptom | Likely cause | Where to look |
|---|---|---|
| Scan stuck in running past the longest scanner | A subprocess hung; the orchestrator awaits its asyncio future. | tail /tmp/securescan-backend.log; cancel the scan; check nmap/ZAP |
| All scanners come back as skipped | None of the underlying CLIs are on PATH. | securescan status |
| scan.failed with error: ... | Orchestrator-level exception (DB write failed, target invalid) | Backend log securescan.scan logger. |
| Specific scanner repeatedly scanner.failed | Tool present but crashed on input. error field has details. | Re-run the tool by hand on the same target. |

tail -f /tmp/securescan-backend.log | grep securescan.scan shows

the scan-lifecycle line for every event. The same data is on the

SSE stream at /api/v1/scans/{id}/events — use whichever fits your

debugging surface.

Next

- Scan types: what each scan_type covers.

- Supported scanners: what each tool finds.

- Findings & severity: the finding shape.

- Suppression: three ways to silence a finding.

Scan types

A scan_type selects which family of scanners runs. The orchestrator

expands ["code", "dependency"] into the union of every scanner whose

scan_type matches.

You can pass any subset; if none are passed the CLI defaults to code

for fast PR feedback. There are six families.

Type table

| Type | Default for securescan diff | Scanners | Typical target |
|---|---|---|---|
| code | ✅ yes | semgrep, bandit, secrets, git-hygiene | Source tree |
| dependency | | trivy, safety, npm-audit, licenses | Source tree (manifests) |
| iac | | checkov, dockerfile | Source tree |
| baseline | | baseline (built-in) | Host or filesystem |
| dast | | builtin_dast, zap | URL |
| network | | nmap | Hostname / IP / range |

The mapping lives in

backend/securescan/scanners/__init__.py

(ALL_SCANNERS registry) and each scanner's scan_type class

attribute.
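The expansion from scan types to scanners can be sketched as a filter over a registry. The pairs below are transcribed from the type table above; the list layout, its ordering, and the select_scanners helper are assumptions for illustration and do not mirror the real ALL_SCANNERS object.

```python
# (scanner name, scan_type) pairs transcribed from the type table above.
ALL_SCANNERS = [
    ("semgrep", "code"), ("bandit", "code"),
    ("secrets", "code"), ("git-hygiene", "code"),
    ("trivy", "dependency"), ("safety", "dependency"),
    ("npm-audit", "dependency"), ("licenses", "dependency"),
    ("checkov", "iac"), ("dockerfile", "iac"),
    ("baseline", "baseline"),
    ("builtin_dast", "dast"), ("zap", "dast"),
    ("nmap", "network"),
]


def select_scanners(scan_types=None):
    """Return scanner names, in registry order, for the requested families."""
    wanted = set(scan_types or ["code"])  # the CLI defaults to code
    return [name for name, kind in ALL_SCANNERS if kind in wanted]
```

Filtering the registry, rather than iterating over the requested types, keeps the run order fixed no matter how the caller orders scan_types.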

code

Static analysis of source files in the target tree. Picks up:

- SAST issues (SQL injection, XSS, command injection, path traversal) via Semgrep with --config auto plus any custom rule packs you declare in .securescan.yml.

- Python-specific insecure imports and bandit's signatures.

- Secrets (hardcoded API keys, tokens, private keys) via the built-in regex bank and Gitleaks.

- Git hygiene: sensitive files committed to the repo, missing .gitignore entries.

Example:

securescan scan ./your-repo --type code --output text

[HIGH] semgrep backend/api.py:42 Use of eval()

[HIGH] bandit backend/db.py:12 SQL injection via str.format

[MEDIUM] secrets config/local.yml:5 AWS access key

The same call via the API:

curl -X POST http://127.0.0.1:8000/api/v1/scans \
  -H 'Content-Type: application/json' \
  -H "X-API-Key: $K" \
  -d '{"target_path":"/abs/path/to/repo","scan_types":["code"]}'

dependency

Manifest + lockfile vulnerability scanning:

- trivy: handles requirements.txt, package.json, Gemfile.lock, Cargo.lock, go.sum, composer.lock, Pipfile.lock, etc.

- safety: Python dependencies against the safety DB.

- npm-audit: npm advisories on transitive deps.

- licenses: copyleft / unknown-license risks via pip-licenses.

Example:

securescan scan ./node-project --type dependency --output sarif \
  --output-file deps.sarif

The licenses scanner reports compliance findings (unknown / GPL /

AGPL detected), not CVEs. It is part of the dependency family

because the data source is the manifest. Filter it out with

.securescan.yml's ignored_rules if your org has explicit

copyleft approval.

iac

Infrastructure-as-code misconfigurations:

- checkov: Terraform, Kubernetes manifests, Helm charts, CloudFormation, Dockerfiles. Hundreds of policies out of the box.

- dockerfile: opinionated checks for :latest base images, running as root, curl | sh patterns, secrets in ENV.

securescan scan ./infra --type iac --output text

The dockerfile scanner is fast and runs even when checkov is not installed; checkov is the heavyweight, broader source.

baseline

Host-config audit: SSH daemon settings, /etc/passwd /

/etc/shadow perms, ~/.ssh perms, kernel parameters, password

policy.

The behavior depends on target_path:

- target_path = "/": host-wide probes (the default behavior).

- Anything else: probes <target>/etc/ssh/sshd_config, <target>/etc/passwd, <target>/etc/shadow. Skips host-only checks like ~/.ssh perms. If none of those files are present, emits one info-severity finding pointing at --baseline-host-probes.

# Audit the running host (requires read access to /etc/...)

securescan scan / --type baseline

# Audit a chrooted filesystem

securescan scan /mnt/snapshot --type baseline

# Force host-scope probes alongside a target scan

securescan scan ./my-config --type baseline --baseline-host-probes

Every baseline finding gets a metadata.baseline_scope tag of

host or target so the audit trail records which mode produced

the finding.

dast

Dynamic application security testing — runs against a live URL:

- builtin_dast: header / cookie / info-disclosure checks. No external dependency. Fast.

- zap: full ZAP active+passive scan. Requires a running ZAP daemon at SECURESCAN_ZAP_ADDRESS.

securescan scan https://staging.example.com \
  --type dast \
  --output text

For the ZAP scanner, set credentials in

~/.config/securescan/.env:

SECURESCAN_ZAP_ADDRESS=http://127.0.0.1:8090

SECURESCAN_ZAP_API_KEY=your-key

Only run DAST against systems you own or have explicit authorization

to test. ZAP active mode is intrusive. The default securescan diff

in CI does not include dast — you have to opt in with

--type dast (or scan-types: code,dast on the GitHub Action).

network

Network-perimeter probe via nmap. Reports open ports, detected

service banners, and a coarse risk classification (telnet, RDP, SMB,

exposed databases, etc.).

securescan scan 10.0.0.1 --type network --output text

Or a CIDR / hostname:

securescan scan example.com --type network

securescan scan 10.0.0.0/24 --type network --output sarif --output-file net.sarif

Combining types

Request several types in one run (the example below repeats --type); all are unioned together:

securescan scan ./your-repo --type code --type dependency --type iac

Or in .securescan.yml:

scan_types:

- code

- dependency

- iac

The PR-mode default is scan-types: code because it produces fast

feedback on every push. Adding dependency is the most common

upgrade for a busy repo.

Picking what to run

- Scanning a PR diff? code (default); add dependency / iac as your team adopts them.

- Scanning a release tag before publishing? code,dependency,iac.

- Auditing a production host? baseline against /.

- Verifying a deployed service? dast against the URL.

- Surveying a subnet? network (with authorization).

Next

- Supported scanners — what each tool produces.

- Suppression — silencing rules across types.

- Compliance — how findings map to OWASP / SOC 2 / PCI-DSS.

Supported scanners

SecureScan ships 14 scanners. Each is a Python class that subclasses

BaseScanner and shells out to the underlying tool. The registry is

backend/securescan/scanners/__init__.py;

each module is named after its scanner, though not always identically (see the Module column below).

Registry

| Scanner | Module | scan_type | What it finds |

|---|---|---|---|

| semgrep | scanners/semgrep.py | code | SQLi, XSS, command injection, hardcoded secrets via Semgrep's rule library. |

| bandit | scanners/bandit.py | code | Python-specific security issues, insecure imports. |

| secrets | scanners/secrets.py | code | Hardcoded credentials, API keys, tokens, private keys. |

| git-hygiene | scanners/gitleaks.py | code | Sensitive files committed to repo, gitleaks rules, missing .gitignore protections. |

| trivy | scanners/trivy.py | dependency | Known CVEs in package manifests and lockfiles across many ecosystems. |

| safety | scanners/safety.py | dependency | Python dependency vulnerabilities from the safety DB. |

| npm-audit | scanners/npm_audit.py | dependency | npm package advisories and transitive vulns. |

| licenses | scanners/license_checker.py | dependency | Copyleft / unknown / restricted license findings via pip-licenses and the license field of npm packages. |

| checkov | scanners/checkov.py | iac | Terraform, Kubernetes, Helm, CloudFormation, Dockerfile misconfigurations. |

| dockerfile | scanners/dockerfile.py | iac | Insecure Docker patterns: :latest, root user, curl | sh, secrets in ENV. |

| baseline | scanners/baseline.py | baseline | SSH config, /etc/passwd perms, password policy, kernel params. |

| builtin_dast | scanners/dast_builtin.py | dast | Missing security headers, info disclosure, insecure cookie flags. No external dep. |

| zap | scanners/zap_scanner.py | dast | OWASP ZAP active + passive scan against a URL. |

| nmap | scanners/nmap_scanner.py | network | Open ports, service detection, risk classification. |

To see what is installed and reachable on the current host:

$ securescan status

Scanner Type Available Version Notes

semgrep code yes 1.71.0

bandit code yes 1.7.5

trivy dependency yes 0.49.1

safety dependency yes 2.3.5

checkov iac no pip install checkov

npm-audit dependency yes npm 10.x uses ambient `npm` on PATH

zap dast no /usr/share/zaproxy/zap.sh; recommended port 8090

nmap network yes 7.94

...

The same data is at GET /api/v1/dashboard/status — the dashboard's

/scan page reads it on mount and disables categories whose scanners

are all unavailable. See Dashboard tour.

Per-scanner notes

Semgrep

- Uses --config auto by default. To override, set semgrep_rules in .securescan.yml:

semgrep_rules:

- .securescan/rules/secrets.yml

- .securescan/rules/unsafe-deserialization.yml

When set, replaces --config auto with one --config <path> per entry. Paths are relative to the config file.

- Rule IDs surface as python.lang.security.audit.eval-detected etc. Use them in severity_overrides: and ignored_rules: to tune.

Bandit

- Runs against Python files only. Rule IDs are B<NNN> (e.g. B106 = hardcoded password).

- One bandit gotcha: it scans __init__.py and test files too. Use inline # securescan: ignore B106 on test fixtures to silence intentional-by-design findings.

Trivy

- The heavyweight dependency scanner. Picks up most ecosystems out of the box (requirements.txt, package-lock.json, Cargo.lock, go.sum, composer.lock, Pipfile.lock).

- Updates its DB on first run; allow ~30s extra latency on a cold cache.

ZAP

- Requires a separately running ZAP daemon. The scanner connects to the daemon's HTTP API.

# ~/.config/securescan/.env

SECURESCAN_ZAP_ADDRESS=http://127.0.0.1:8090

SECURESCAN_ZAP_API_KEY=your-key

- The Arch Linux launcher is auto-detected at /usr/share/zaproxy/zap.sh. The scanner's install_hint recommends port 8090 because 8080 is commonly busy.

nmap

- Default scan is non-intrusive (TCP connect, top 1000 ports, service banners). Risk classification flags exposed databases (3306, 5432, 6379, 27017), unencrypted protocols (telnet 23, FTP 21), and SMB (445).

nmap is not passive. Only run it against networks you own or have explicit written authorization to scan. SecureScan does not enforce scope authorization — that is your responsibility.

baseline

- Built in (no external CLI). Implements every probe directly in Python.

- Probes are categorised as host or target scope; see Scan types for how target_path selects between them.

- Surfaces metadata.baseline_scope = "host" | "target" on every finding for audit trail.

Adding scanners

Adding a new scanner means dropping a new module under

backend/securescan/scanners/ that subclasses BaseScanner and

appending an instance to ALL_SCANNERS. The base class handles:

- Subprocess spawn + cancellation.

- Stdout / stderr capture.

- install_hint + availability detection.

- Wrapping returned dicts into Finding instances with the right scan_type, scanner name, and severity normalization.

The BaseScanner interface lives in

backend/securescan/scanners/base.py.

That said, the v0.9.0 contract treats the registry as fixed — new

scanners should land via PR rather than runtime registration.

Next

- Findings & severity — the normalized shape every scanner outputs.

- Suppression — silencing a noisy scanner / rule.

- Compliance — mapping rule IDs to frameworks.

Findings & severity

Every scanner output is normalized to the same finding shape. That

shape is what the API returns, what securescan diff compares, what

SARIF / JSON / CSV / JUnit exporters serialize, and what the dashboard

renders.

The Finding shape

{

"id": "f-2c1a93cb",

"scan_id": "0f1a93cb-44c2-4c8e-9f92-0a7c5a2e1b51",

"scanner": "semgrep",

"scan_type": "code",

"severity": "high",

"title": "Use of eval()",

"description": "Detected use of eval(); evaluating arbitrary input is dangerous.",

"file_path": "backend/securescan/cli.py",

"line": 142,

"column": 8,

"rule_id": "python.lang.security.audit.eval-detected",

"cwe": "CWE-95",

"fingerprint": "9d2f3a1b8c4e5f6a7b8c9d0e1f2a3b4c5d6e7f8a9b0c1d2e3f4a5b6c7d8e9f0a1",

"compliance_tags": ["OWASP-A03", "PCI-DSS-6.5.1"],

"metadata": {

"suppressed_by": null,

"original_severity": null

}

}

The Pydantic model is Finding in

backend/securescan/models.py.

The dashboard's findings endpoint (GET /api/v1/scans/{id}/findings)

returns FindingWithState — every field above plus an optional

state: FindingState | null for the triage verdict

(see Triage workflow).

Severity

Five levels. In the dashboard they render as a single tonal ramp around a warm hue, not stoplight RGB:

| Level | Meaning | Default --fail-on-severity behavior |

|---|---|---|

| critical | Drop-everything. Active exploitation likely. | Fail |

| high | Real risk. Fix before release. | Fail |

| medium | Should fix; not blocking. | Fail when --fail-on-severity=medium |

| low | Nice to fix. | |

| info | Informational; not actionable on its own. | |

Severity is per-scanner but normalized here. Different scanners report on different scales (Trivy uses CVSS, Bandit uses LOW/MEDIUM/HIGH, Semgrep uses INFO/WARNING/ERROR), and they all map into this five-level common denominator.

You can override severity per rule via .securescan.yml:

severity_overrides:

python.lang.security.audit.dangerous-system-call: medium

python.lang.security.audit.eval-detected: low

When an override applies, the original severity is preserved on

metadata.original_severity, and the dashboard renders

severity (was: original) in the row so the audit trail stays

visible.
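
A minimal sketch of how an override could be applied while keeping the audit trail, assuming findings are plain dicts shaped like the example above. apply_severity_override and severity_label are hypothetical helper names, not functions from the codebase.

```python
def apply_severity_override(finding: dict, overrides: dict) -> dict:
    """Return a copy of the finding with any matching override applied."""
    new_severity = overrides.get(finding["rule_id"])
    if new_severity is None or new_severity == finding["severity"]:
        return finding
    # Preserve the scanner's verdict before rewriting the severity.
    metadata = dict(finding.get("metadata") or {})
    metadata["original_severity"] = finding["severity"]
    return {**finding, "severity": new_severity, "metadata": metadata}


def severity_label(finding: dict) -> str:
    """Render "severity (was: original)" when an override was applied."""
    original = (finding.get("metadata") or {}).get("original_severity")
    if original:
        return f"{finding['severity']} (was: {original})"
    return finding["severity"]
```

The input finding is never mutated, so the pre-override record stays available to whatever called the helper.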

Risk score

The scan summary (GET /api/v1/scans/{id}/summary) carries a

risk_score field, a single number aimed at trend lines and

quarterly reviews. Roughly, findings are weighted by severity rank (critical/high count for far more than low/info) and by scanner confidence.

The exact formula lives in

backend/securescan/scoring.py

and is intentionally not documented in detail here — the score is

useful as a trend indicator, not as a precise metric to negotiate.

For decisions, look at the severity counts directly.

For the dashboard Overview page's trend chart and the scan-detail

StatLine, severity counts are the primary metric. risk_score is a

single rolled-up number for headline use.

Fingerprints — cross-scan identity

Every finding gets a deterministic fingerprint:

sha256(

scanner | rule_id | file_path | normalized_line_context | cwe

)

Construction is in

backend/securescan/fingerprint.py.

The normalized_line_context is the matched line with whitespace

collapsed and trivial reformat normalized — so renaming a variable

shifts the fingerprint, but reformatting the file does not.
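
The construction can be sketched as follows. This is an assumption-laden illustration rather than the contents of fingerprint.py: the "|" join, the UTF-8 encoding, and the whitespace-only normalization are guesses, and the real normalization may handle more reformatting cases.

```python
import hashlib
import re


def normalize_line_context(line: str) -> str:
    """Collapse runs of whitespace and trim the ends of the matched line."""
    return re.sub(r"\s+", " ", line).strip()


def fingerprint(scanner: str, rule_id: str, file_path: str,
                line_context: str, cwe: str = "") -> str:
    """Hash the identifying fields of a finding into a stable hex digest."""
    parts = [scanner, rule_id, file_path,
             normalize_line_context(line_context), cwe or ""]
    return hashlib.sha256("|".join(parts).encode("utf-8")).hexdigest()
```

Because the line number is not part of the hash, moving a line up or down the file leaves the fingerprint unchanged; changing an identifier on the line produces a new one.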

This identity is what keeps:

- Triage verdicts sticky across rescans (finding_states is keyed on fingerprint, not (scan_id, finding_id)).

- PR comment threads stable across re-runs (the inline-review poster looks up existing comments by fingerprint and PATCHes them rather than posting duplicates).

- SARIF re-uploads clean: partialFingerprints.primaryLocationLineHash is set from the same value, so GitHub's Security tab dedupes.

- securescan compare sane: NEW / STILL_PRESENT / DISAPPEARED is computed by fingerprint set difference.

Practically: if you triage a finding as false_positive once, it

stays a false positive across every later scan of the same target,

even after DELETE /scans/{id}. See Triage.

Deduplication

Multiple scanners can find the same underlying issue from different

angles — Bandit and Semgrep both flag eval(). The orchestrator runs

dedup_key from

backend/securescan/dedup.py

across the union of scanner outputs and keeps the higher-confidence

finding (the one whose scanner is more authoritative for that rule

class).

The dropped findings still show up in the scan's lifecycle log, but they are filtered before persistence, so the database stores only the canonical finding for each underlying issue.
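
A rough sketch of the keep-the-more-authoritative-finding idea. The key fields and the AUTHORITY ranking below are assumptions made for illustration; the actual dedup_key and the per-rule-class authority rules live in dedup.py and may differ.

```python
# Hypothetical authority ranking; higher wins when two scanners collide.
AUTHORITY = {"semgrep": 2, "bandit": 1}


def dedup_key(finding: dict) -> tuple:
    """Assumed identity of the underlying issue: location plus CWE."""
    return (finding["file_path"], finding["line"], finding.get("cwe"))


def deduplicate(findings: list) -> tuple:
    """Return (kept, dropped); kept has one finding per dedup key."""
    kept = {}
    dropped = []
    for finding in findings:
        key = dedup_key(finding)
        current = kept.get(key)
        if current is None:
            kept[key] = finding
        elif AUTHORITY.get(finding["scanner"], 0) > AUTHORITY.get(current["scanner"], 0):
            dropped.append(current)
            kept[key] = finding
        else:
            dropped.append(finding)
    return list(kept.values()), dropped
```

Returning the dropped list separately matches the behavior described above: the losers can be written to a log while only the kept findings are persisted.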

Severity badges

The dashboard renders severity as a colored dot prefix + the level text:

● critical coral background

● high burnt orange

● medium saffron

● low dusty teal (NOT bright blue)

● info ash

The exact OKLCH values live in

frontend/src/app/globals.css.

There is no neon red / yellow / green — see DESIGN.md for

the rationale.

Compliance tags

Each finding can carry one or more compliance_tags — strings like

OWASP-A03, PCI-DSS-6.5.1, SOC2-CC7.1. The mapping engine

(backend/securescan/compliance.py) matches by CWE, rule_id, or

keyword and the dashboard renders chips per finding plus a coverage

summary on the Overview page. See Compliance for

which frameworks are mapped and how.

Suppression metadata

When a finding is suppressed (inline comment, config rule, or

baseline), metadata.suppressed_by is set to one of:

- "inline": # securescan: ignore RULE-ID on the line.

- "config": .securescan.yml ignored_rules.

- "baseline": present in the saved baseline JSON.

By default, suppressed findings are hidden from CI output (PR

comments, SARIF) but rendered on a TTY (and in the dashboard via the

"Show suppressed" toggle) with a [SUPPRESSED:<reason>] prefix so

you can audit the breakdown without re-running. Force visibility

everywhere with --show-suppressed.

See Suppression.

Output formats

| Format | Where it lives | Use |

|---|---|---|

| text | CLI default for TTY runs. | Human-readable terminal output. |

| json | --output json: finding records as a JSON array. | Snapshot mode, downstream tools, baselines. |

| sarif | --output sarif: SARIF v2.1.0 with partialFingerprints. | GitHub Security tab, third-party SARIF readers. |

| csv | --output csv: one row per finding. | Spreadsheet import, compliance reports. |

| junit | --output junit: failures = findings. | CI test-result tabs. |

| github-pr-comment | --output github-pr-comment: markdown with <!-- securescan:diff --> upsert marker. | The default for securescan diff. |

| github-review | --output github-review: payload for GitHub's Reviews API. | Inline-review mode of the GitHub Action. |

All formats produce byte-identical output for the same inputs — see Architecture: determinism contract.

Next

- Suppression — three ways to silence a finding.

- Triage — verdicts that survive across scans.

- Compliance — framework mapping.

Suppression

Three independent suppression mechanisms, with a fixed precedence:

inline > config > baseline

The most-local mechanism wins; an inline comment defeats a config

rule, a config rule defeats a baseline entry. Each mechanism stamps

metadata.suppressed_by on the finding so the audit trail records

which path silenced it.

1. Inline ignore comments

Place a comment on the finding's line, or the line above:

data = eval(payload) # securescan: ignore python.lang.security.audit.eval-detected

# securescan: ignore-next-line python.lang.security.audit.eval-detected

result = eval(other_payload)

Recognized comment styles: #, //, --. Multiple rule IDs are

comma-separated. * is a wildcard.

// securescan: ignore-next-line javascript.lang.security.detect-eval, javascript.lang.security.detect-non-literal-regex

const fn = new Function(userCode);

-- securescan: ignore * (wildcard — silences EVERY rule on this line)

SELECT * FROM users WHERE id = '${user_id}';

When this mechanism applies, metadata.suppressed_by = "inline".
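
To make the comment grammar concrete, here is a small parser sketch. The regular expression and the function names are assumptions for illustration; the real matcher may accept more forms or treat edge cases differently.

```python
import re

# Comment leaders (#, //, --) followed by the directive and a rule list.
_DIRECTIVE = re.compile(
    r"(?:#|//|--)\s*securescan:\s*(ignore-next-line|ignore)\s+(.+)$"
)


def parse_directive(line: str):
    """Return (kind, rule_ids) for an ignore comment, or None."""
    match = _DIRECTIVE.search(line)
    if match is None:
        return None
    # Rule IDs are comma-separated; keep the first token of each entry so
    # trailing remarks after the ID are not treated as part of it.
    rules = {part.split()[0] for part in match.group(2).split(",") if part.strip()}
    return match.group(1), rules


def is_inline_ignored(lines: list, line_no: int, rule_id: str) -> bool:
    """Check the finding's own line, then the line above (1-indexed)."""
    candidates = [(lines[line_no - 1], "ignore")]
    if line_no >= 2:
        candidates.append((lines[line_no - 2], "ignore-next-line"))
    for text, wanted_kind in candidates:
        parsed = parse_directive(text)
        if parsed and parsed[0] == wanted_kind:
            if "*" in parsed[1] or rule_id in parsed[1]:
                return True
    return False
```

In this sketch an ignore comment only covers its own line and ignore-next-line only covers the line below, which is one reasonable reading of the two forms shown above.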

The Metbcy/securescan@v1 GitHub Action with pr-mode: inline, inline-suggestions: true posts each finding with a one-click

suggestion block adding the # securescan: ignore RULE-ID

comment above the line. Reviewers can apply it with the GitHub UI's

"Commit suggestion" button. See GitHub Action.

2. Config-driven rules (.securescan.yml)

Repo-wide rule list. Best for rules that fire too often to silence inline.

# .securescan.yml at the repo root

ignored_rules:

- python.lang.security.audit.dynamic-django-attribute

- B106 # bandit: hardcoded password (we use a vault)

- python.django.security.audit.csrf-exempt-without-csrf-token

The config file is auto-detected: securescan walks up from the

target directory until it finds .securescan.yml or hits a .git/

boundary. To validate:

$ securescan config validate

.securescan.yml: OK

scan_types: code, dependency

ignored_rules: 3

severity_overrides: 2

Validation catches typos in severity values, missing rule-pack

paths, and ignored_rules ↔ severity_overrides collisions before

they bite at scan time.

When this mechanism applies, metadata.suppressed_by = "config".
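
The auto-detection walk described above might look like the following sketch. find_config is a hypothetical name, and checking for the config file before the .git boundary in each directory is an assumption about ordering.

```python
from pathlib import Path
from typing import Optional


def find_config(target: Path, name: str = ".securescan.yml") -> Optional[Path]:
    """Walk up from target until the config file or a .git boundary is found."""
    start = target.resolve()
    if start.is_file():
        start = start.parent
    for directory in (start, *start.parents):
        candidate = directory / name
        if candidate.is_file():
            return candidate
        # Stop at the repository root so a parent repo's config is not used.
        if (directory / ".git").exists():
            return None
    return None
```

Stopping at .git keeps a config file that sits above the repository from silently applying to it.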

Severity overrides (related)

Not strictly suppression, but adjacent: severity_overrides rewrites

a rule's severity post-scan, preserving the original on

metadata.original_severity:

severity_overrides:

python.lang.security.audit.dangerous-system-call: medium

some.noisy.rule: low

The dashboard renders severity (was: high) so reviewers know the

override was applied. See Findings & severity.

3. Baseline (legacy findings)

Adopt-on-an-existing-codebase mechanism. Snapshot the current set of findings; subsequent scans suppress everything in the snapshot.

# Once, when adopting SecureScan:

securescan baseline # writes .securescan/baseline.json (deterministic, git-friendly)

git add .securescan/baseline.json

git commit -m "chore: SecureScan baseline"

# Every PR / CI run:

securescan diff . --base-ref main --head-ref HEAD \

--baseline .securescan/baseline.json

The baseline file is canonicalized and byte-deterministic: no timestamps, relative target_path, sorted finding entries. Two identical scans produce two identical baseline files, so it diffs cleanly in code review.

When this mechanism applies, metadata.suppressed_by = "baseline".

Refreshing the baseline

When findings get fixed, refresh:

securescan baseline # overwrites .securescan/baseline.json

# What disappeared since the last baseline?

securescan compare .securescan/baseline.json

The compare subcommand reports DISAPPEARED findings — your

remediation drift. Useful at the end of a sprint to confirm the

work landed.

Precedence in detail

flowchart TD

F[Finding from scanner] --> Q1{Has inline ignore<br>on this line?}

Q1 -->|yes| Inline[suppressed_by: inline]

Q1 -->|no| Q2{Rule listed in<br>ignored_rules?}

Q2 -->|yes| Config[suppressed_by: config]

Q2 -->|no| Q3{Fingerprint in<br>baseline.json?}

Q3 -->|yes| Baseline[suppressed_by: baseline]

Q3 -->|no| Kept[kept — visible in output]

Inline --> Out[output filtering]

Config --> Out

Baseline --> Out

Kept --> Out

The "winner" is the most-local applicable mechanism. If a finding is

both inline-ignored AND in the baseline, suppressed_by = "inline".
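
The flowchart reduces to a first-match chain. A minimal sketch, assuming the inline check, the ignored_rules list, and the baseline fingerprints have already been computed or loaded; resolve_suppression is a hypothetical name.

```python
from typing import Optional


def resolve_suppression(inline_ignored: bool, rule_id: str, ignored_rules: set,
                        fingerprint: str, baseline: set) -> Optional[str]:
    """Return the winning mechanism, or None when the finding is kept."""
    if inline_ignored:
        return "inline"
    if rule_id in ignored_rules:
        return "config"
    if fingerprint in baseline:
        return "baseline"
    return None
```

The return value maps directly onto metadata.suppressed_by, with None standing for a kept finding.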

What gets hidden

By default:

| Surface | Suppressed shown? | How to override |

|---|---|---|

| securescan diff PR comment | No | --show-suppressed |

| SARIF (--output sarif) | No | --show-suppressed |

| CSV (--output csv) | No | --show-suppressed |

| securescan diff on TTY | Yes (with prefix) | --no-suppress to disable suppression entirely |

| Dashboard findings table | No | Toggle: "Show suppressed findings" (per-page) |

| --fail-on-severity counting | No | Suppressed findings never gate the build by design |

--fail-on-severity intentionally counts only kept findings.

A baseline-suppressed critical does not turn a clean PR red — that is

the whole point of baselining.
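
The gating rule can be sketched as a threshold check over kept findings only. SEVERITY_RANK and should_fail are hypothetical names, and treating "none" as "never fail" is an assumption based on the summary output shown later in this section.

```python
from typing import Optional

SEVERITY_RANK = {"info": 0, "low": 1, "medium": 2, "high": 3, "critical": 4}


def should_fail(findings: list, fail_on: Optional[str]) -> bool:
    """True when any kept finding is at or above the threshold."""
    if fail_on is None or fail_on == "none":
        return False
    threshold = SEVERITY_RANK[fail_on]
    return any(
        (f.get("metadata") or {}).get("suppressed_by") is None
        and SEVERITY_RANK[f["severity"]] >= threshold
        for f in findings
    )
```

With this shape, a baseline-suppressed critical contributes nothing to the result, while a kept medium fails the run only when the threshold is medium or lower.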

Audit: what was suppressed?

When you want to see the breakdown:

$ securescan diff . --base-ref main --head-ref HEAD --show-suppressed

[NEW]

[HIGH] semgrep backend/api.py:42 Use of eval()

[MEDIUM] secrets config.yml:5 AWS access key

[SUPPRESSED:inline]

[HIGH] semgrep tests/fixtures/eval.py:3 intentional eval in fixture

[SUPPRESSED:config]

[LOW] semgrep migrations/0001.py:12 ignored_rules: noisy-rule

[SUPPRESSED:baseline]

[HIGH] bandit backend/legacy.py:88 (legacy finding)

Summary

NEW: 2

Suppressed: 3 (inline=1, config=1, baseline=1)

fail-on-severity: none

The PR comment summary table also includes a

"Suppressed: N (inline=I, config=C, baseline=B)" row when

--show-suppressed is set so reviewers can audit without re-running.

Disabling suppression entirely (kill switch)

Sometimes you want every finding visible — review pass, audit, etc:

securescan diff . --base-ref main --head-ref HEAD --no-suppress

--no-suppress ignores inline comments, config rules, AND the

baseline. Useful but rarely the right CI default.

Picking the right mechanism

- One bad fixture line → inline comment.

- A scanner rule that fires hundreds of times → ignored_rules.

- Adopting SecureScan on an old codebase → securescan baseline.

- A rule that fires "right" on production code but is wrong here → severity_overrides to lower its weight first; ignored_rules only if it is genuinely wrong.

- An entire scan family that does not apply to your repo → just don't pass that --type. Suppression is for findings, not scanners.

Next

- Triage workflow — durable verdicts beyond suppression.

- Findings & severity — the underlying model.

- GitHub Action (inline-suggestions: true for one-click ignores).

Triage workflow

Triage is how you record what you decided about a finding, durably, across rescans of the same target. Introduced in v0.7.0, it sits one layer above Suppression: suppression silences a finding, triage records the human verdict on a finding — including whether it was actually fixed, accepted as risk, or won't be fixed.

Triage statuses

new (default — no verdict recorded)

triaged (acknowledged; under investigation)

false_positive (scanner is wrong)

accepted_risk (real, but accepted by the team)

fixed (the underlying code was changed; verify on rescan)

wont_fix (real, but out of scope to fix)

The dashboard's findings table renders a colored status pill in the

"Status" column. The default filter hides

{false_positive, accepted_risk, wont_fix}. Those are decisions, so the

table no longer needs to draw attention to them.

Scope: per-fingerprint, not per-scan

Triage state is keyed on the cross-scan fingerprint — not the scan id. Three consequences:

- A verdict survives every later scan of the same target. Mark a finding false_positive once; the next scan, and the one after, keep that verdict.

- A verdict survives DELETE /scans/{id}. The triage row stays; it reactivates when a matching fingerprint appears again.

- A "fixed" finding shows up loud on regression. If it reappears in a later scan, the row is rendered with the fixed pill + strikethrough, so the team can see at a glance that something came back.

This is by design. Triage state and per-finding comments are an audit trail, not scan metadata.

Setting a verdict

FP="9d2f3a1b8c4e5f6a..."

curl -X PATCH \

"http://127.0.0.1:8000/api/v1/findings/$FP/state" \

-H "X-API-Key: $K" \

-H 'Content-Type: application/json' \

-d '{

"status": "false_positive",

"note": "intentional eval in test fixture",

"updated_by": "alice"

}'

Response:

{

"fingerprint": "9d2f3a1b8c4e5f6a...",

"status": "false_positive",

"note": "intentional eval in test fixture",

"updated_at": "2026-04-29T20:14:22.000000",

"updated_by": "alice"

}

PATCH semantics here are replace, not merge. Omitting note

clears the prior note. Use the comments thread for incremental

remarks; reserve note for a one-line "why" on the verdict.

The endpoint requires the write scope — see

Scopes. Source:

backend/securescan/api/triage.py.
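
For scripted triage, the same call can be built with Python's standard library. A sketch only: build_state_request is a hypothetical helper, the status set is copied from the list above, and client-side validation is a convenience here, not a statement about what the server checks.

```python
import json
import urllib.request
from typing import Optional
from urllib.parse import quote

STATUSES = {"new", "triaged", "false_positive", "accepted_risk", "fixed", "wont_fix"}


def build_state_request(base_url: str, fingerprint: str, api_key: str,
                        status: str, note: Optional[str] = None,
                        updated_by: Optional[str] = None) -> urllib.request.Request:
    """Build (but do not send) the PATCH request that sets a verdict."""
    if status not in STATUSES:
        raise ValueError(f"unknown triage status: {status}")
    body = {"status": status}
    if note is not None:
        body["note"] = note
    if updated_by is not None:
        body["updated_by"] = updated_by
    url = f"{base_url.rstrip('/')}/api/v1/findings/{quote(fingerprint, safe='')}/state"
    return urllib.request.Request(
        url,
        data=json.dumps(body).encode("utf-8"),
        method="PATCH",
        headers={"X-API-Key": api_key, "Content-Type": "application/json"},
    )
```

Pass the result to urllib.request.urlopen to send it. Because the endpoint replaces rather than merges, leaving note as None here would clear any note stored earlier.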

Comments

Each finding has a per-fingerprint comments thread. Like the verdict, comments are durable across scans.

# List comments

curl "http://127.0.0.1:8000/api/v1/findings/$FP/comments" \

-H "X-API-Key: $K"

# Add a comment

curl -X POST "http://127.0.0.1:8000/api/v1/findings/$FP/comments" \

-H "X-API-Key: $K" \

-H 'Content-Type: application/json' \

-d '{"text":"Reviewed with security team — green-lit.","author":"bob"}'

# Delete a comment by id

curl -X DELETE \

"http://127.0.0.1:8000/api/v1/findings/$FP/comments/c-12ab" \

-H "X-API-Key: $K"

Comments require the write scope (add and delete); listing

requires read.

The dashboard renders the comments thread in the expanded row panel, lazy-loaded the first time you open it. Add / delete are inline.

In the dashboard

The Scan Detail page's findings table:

- New Status column shows the pill (color-coded; fixed adds strikethrough + accent-green).

- Sticky filter bar has a status filter chip strip in addition to severity chips. Triage and suppression filters are independent and AND-combined.

- Default-hide set is {false_positive, accepted_risk, wont_fix}; toggle them via the filter to see them.

- Expand a row → Triage panel (status dropdown + note textarea) + Comments panel (lazy loaded; add / delete inline).

See Dashboard tour.

Endpoints

| Method | Path | Scope | Notes |

|---|---|---|---|

| PATCH | /api/v1/findings/{fingerprint}/state | write | Set / replace verdict + note. Server stamps updated_at. |

| GET | /api/v1/findings/{fingerprint}/comments | read | Returns [] when no comments. ASC by created_at. |

| POST | /api/v1/findings/{fingerprint}/comments | write | 201 with the persisted row. |

| DELETE | /api/v1/findings/{fingerprint}/comments/{comment_id} | write | 204 on success, 404 if id is unknown. |

The fingerprint is not validated against any current scan — it's intentional that you can set state on a fingerprint that does not correspond to any current finding. The state row reactivates when the finding reappears.

Workflow with rescans

sequenceDiagram

autonumber

participant Dev as Developer

participant API as SecureScan

participant DB as DB

Dev->>API: POST /api/v1/scans (target=./repo)

API-->>Dev: scan_1 (5 findings)

Dev->>API: PATCH /findings/FP-1/state {status:false_positive}

API->>DB: INSERT finding_states (FP-1, false_positive)

Dev->>API: DELETE /api/v1/scans/scan_1

Note over DB: finding_states (FP-1) survives

Dev->>API: POST /api/v1/scans (target=./repo)

API-->>Dev: scan_2 (same 5 findings)

Dev->>API: GET /findings (filtered)

API->>DB: JOIN findings(scan_2) ON finding_states by FP

API-->>Dev: scan_2 findings — FP-1 hidden by default filter

A fix workflow:

sequenceDiagram

autonumber

participant Dev as Developer

participant API as SecureScan

Dev->>API: PATCH /findings/FP-2/state {status:fixed,note:"fixed in PR 42"}

Note over Dev: ... time passes, rescan ...

Dev->>API: POST /api/v1/scans

API-->>Dev: scan_3 — FP-2 not present (truly fixed)

Note over Dev: regression check passes

Note over Dev: ... time passes, refactor ...

Dev->>API: POST /api/v1/scans

API-->>Dev: scan_4 — FP-2 present again

Note over Dev: dashboard shows FP-2 with strikethrough fixed pill — regression signal

CLI access

There is no first-class CLI for triage in v0.9.0; use curl or the

dashboard. A securescan triage subcommand is on the roadmap.

Triage vs. suppression: when to use which

| Question | Mechanism |

|---|---|

| "Stop printing this finding in PR comments forever" | Suppression (ignored_rules) |

| "I've reviewed this; record my decision" | Triage (PATCH /findings/{fp}/state) |

| "I want this finding gone but I want to know if it comes back" | Triage fixed (NOT default-hidden — regression loud) |

| "Adopt SecureScan on an old codebase" | Suppression (securescan baseline) |

| "Track who decided what, when" | Triage (updated_by + comments) |

In short: suppression silences output, triage records decisions. You can use both — a finding can be triaged AND suppressed.

Next

- Findings & severity — the fingerprint that keys triage state.

- Suppression — for output filtering, not decisions.

- API endpoints — full request/response shape.

Compliance

SecureScan ships a compliance-mapping engine that tags each finding

with framework references — OWASP Top 10, CIS, PCI-DSS, SOC 2 — by

matching the finding's CWE, rule_id, or scanner output keywords

against per-framework data files.

This is a coverage indicator, not a certification. SecureScan does not certify your org against any framework; it surfaces which findings would map to which controls so you can demonstrate coverage and prioritize remediation.

Mapped frameworks

| Framework | Where rules come from |

|---|---|

| OWASP Top 10 | CWE → A01–A10 mapping. Tags look like OWASP-A03, OWASP-A07. |

| CIS Controls | Control category mapping (CIS-3, CIS-5, …) by rule_id keyword. |

| PCI-DSS | Specific requirement IDs: PCI-DSS-6.5.1 (injection), PCI-DSS-2.2 (config), etc. |

| SOC 2 | Trust service criteria: SOC2-CC6.1 (logical access), SOC2-CC7.1 (system operations), etc. |

The mapper lives in

backend/securescan/compliance.py

and uses simple data files under

backend/securescan/data/.

Adding a framework means dropping a JSON file with the rule mappings

— no code change.

How tags are computed

For each finding the mapper checks:

- CWE (cwe field, e.g. CWE-89). Direct lookup against the framework's CWE → control table.

- rule_id. Keyword match against per-framework lists (B106 → SOC2-CC6.1; eval-detected → OWASP-A03; etc.).

- Scanner output keywords. Falls back to substring match against title + description for rules without a CWE or specific mapping.

Results are deduplicated and sorted alphabetically. A finding can carry multiple tags from multiple frameworks:

{

"rule_id": "python.lang.security.audit.eval-detected",

"cwe": "CWE-95",

"compliance_tags": [

"OWASP-A03",

"PCI-DSS-6.5.1",

"SOC2-CC7.1"

]

}
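
The three checks can be sketched as one function. The tables below are tiny hypothetical stand-ins for the JSON data files (the X-Frame-Options entry in particular is invented for illustration), and compliance_tags is not the project's function name as far as this page states.

```python
# Hypothetical excerpts of the per-framework data, merged for brevity.
CWE_MAP = {"CWE-95": ["OWASP-A03", "PCI-DSS-6.5.1"]}
RULE_KEYWORDS = {"B106": ["SOC2-CC6.1"], "eval-detected": ["OWASP-A03"]}
TEXT_KEYWORDS = {"x-frame-options": ["OWASP-A05"]}


def compliance_tags(finding: dict) -> list:
    """Return the deduplicated, alphabetically sorted tags for a finding."""
    tags = set(CWE_MAP.get(finding.get("cwe") or "", []))
    rule_id = finding.get("rule_id") or ""
    for keyword, controls in RULE_KEYWORDS.items():
        if keyword in rule_id:
            tags.update(controls)
    if not tags:
        # Fallback: substring match on title + description.
        text = f"{finding.get('title', '')} {finding.get('description', '')}".lower()
        for keyword, controls in TEXT_KEYWORDS.items():
            if keyword in text:
                tags.update(controls)
    return sorted(tags)
```

Collecting into a set and sorting at the end gives the deduplicated, alphabetical result described above regardless of which check produced each tag.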

In the dashboard

Per-finding chips

Each finding row in the table renders a Compliance column with chip icons for each tag. Hover a chip to see the framework + control name.

Coverage cards

The Overview page renders one tokenized coverage card per framework:

┌─ OWASP Top 10 ─────────────────────────┐

│ 6 / 10 controls observed │

│ ●●●●●●○○○○ │

│ Last seen: A03, A07 (this scan) │

└────────────────────────────────────────┘

The cards link to a per-framework drill-down with the matching findings grouped by control.

API

List findings filtered by compliance tag

curl "http://127.0.0.1:8000/api/v1/scans/$SCAN_ID/findings?compliance=OWASP-A03" \

-H "X-API-Key: $K"

Returns only findings whose compliance_tags includes

OWASP-A03. Multiple filters can be combined with the severity /

scan_type query params (AND semantics).

Coverage summary

curl "http://127.0.0.1:8000/api/v1/compliance/coverage?scan_id=$SCAN_ID" \

-H "X-API-Key: $K"

{

"frameworks": {

"OWASP": {

"controls": ["A01", "A02", "A03", "A04", "A05", "A06", "A07", "A08", "A09", "A10"],

"observed": ["A03", "A07"],

"coverage_pct": 20.0

},

"PCI-DSS": {

"controls": ["1.2", "2.2", "6.5.1", ...],

"observed": ["6.5.1"],

"coverage_pct": 12.5

}

}

}
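
The coverage_pct values in the response are consistent with observed controls divided by total controls. A sketch under that assumption; framework_coverage is a hypothetical name and the rounding to one decimal place is a guess.

```python
def framework_coverage(controls: list, observed: list) -> dict:
    """Summarize which of a framework's controls were touched by findings."""
    observed_set = set(observed)
    # Keep the framework's own ordering and ignore unknown control IDs.
    seen = [control for control in controls if control in observed_set]
    pct = round(100.0 * len(seen) / len(controls), 1) if controls else 0.0
    return {"controls": list(controls), "observed": seen, "coverage_pct": pct}
```

Two observed controls out of ten gives 20.0, matching the OWASP entry in the sample response.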

Use in PR review

Compliance tags are included in the SARIF output's

properties.tags per result:

{

"ruleId": "python.lang.security.audit.eval-detected",

"level": "warning",

"properties": {

"tags": ["OWASP-A03", "PCI-DSS-6.5.1", "SOC2-CC7.1"],

"suppressed_by": null

}

}

When uploaded to GitHub's Security tab, those become searchable tags on the alert.

In the PR comment (github-pr-comment output), tags are rendered

inline next to each finding so reviewers see compliance impact at a

glance:

[HIGH] Use of eval() · semgrep · OWASP-A03 PCI-DSS-6.5.1 SOC2-CC7.1

backend/api.py:42

What this is not

The compliance engine is a coverage indicator. It tags findings that touch a control. It does not:

- Audit your org against the framework.

- Generate a control matrix on its own.

- Prove the absence of issues for unobserved controls.

- Replace the work of a qualified auditor.

What it gives you is a useful prioritization signal — a critical finding that touches OWASP-A03 + PCI-DSS-6.5.1 + SOC2-CC7.1 should be above one that touches none.

Customizing mappings

To add or override a mapping, edit the per-framework JSON file under

backend/securescan/data/. Each entry is a (rule_id | cwe | keyword)

→ [control_id, ...] mapping. Restart the backend after edits.

A custom-framework PR is welcome; please include rationale and at least one known-good test case.

Next

- Findings & severity: finding shape with compliance_tags.

- API endpoints: coverage / per-tag filtering endpoints.

Dashboard tour

The dashboard is the secondary surface — the CLI is the source of

truth. You go to the dashboard when you want to browse historical

scans, interactively triage backlog, or show somebody else

what's going on. It is a Next.js app under

frontend/;

all pages talk only to the backend's REST API.

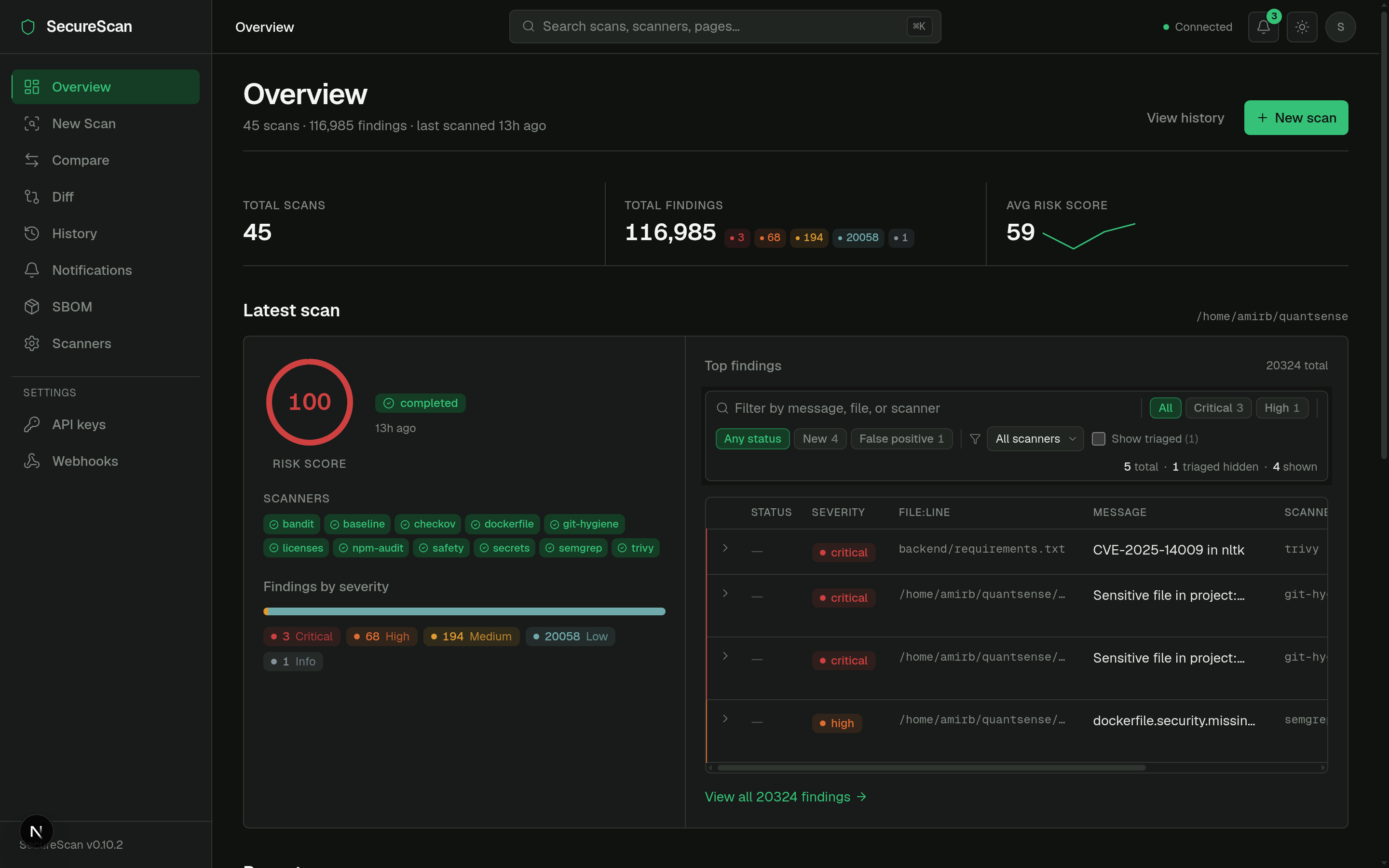

This tour walks the v0.6.0 redesign. The screenshots-in-words below match what you see on a fresh local install.

App shell

Persistent layout, applied to every page (defined in

frontend/src/app/layout.tsx):

- Sidebar (220px, left). Page nav grouped into Scans, Reports, Settings. Collapses to icon strip below 1024px.

- Topbar (56px, sticky). Breadcrumb-style page label (left), command palette trigger (⌘K, center), and on the right: notifications bell, API health indicator, theme toggle.

- Main content. Page-specific.

The shell is intentionally calm — refined neutrals, single accent color (moss green), no neon. See DESIGN.md for the rules.

Overview (/)

The home page. Layout:

- PageHeader — title "Overview", scan count + scanner count metadata, "New scan" primary action.

- StatLine — running totals: total scans, total findings, critical / high counts, last scan timestamp.

- Latest scan two-column section — the most recent scan's status, target, scanner-chip strip, and severity counts.

- Recent scans compact table — last ~10 rows.

- Compliance coverage cards — one per framework. See Compliance.

Source: frontend/src/app/page.tsx.

New scan (/scan)

Two-column wizard with a sticky preview panel showing what is about to run.

LEFT (form) RIGHT (sticky preview)

───────────────────────────────── ──────────────────────────

Target path [browse...] Will run:

☑ semgrep

Scan types ☑ bandit

☑ Code (4 scanners) ☑ secrets

☐ Dependency (3 scanners) ☑ git-hygiene

☐ IaC (2 scanners)

☐ Baseline (1 scanner) Skipped (1)

☐ DAST (URL required) checkov: pip install checkov

☐ Network (host required)

Quick presets Severity threshold

[code-only] [dep-only] [full] Fail at: high

Recently scanned

/home/me/proj-a

/home/me/proj-b [ Start scan ]

The page reads GET /api/dashboard/status on mount and disables categories whose scanners are all unavailable, with inline install hints. Default selection adapts to availability — a host with no DAST tools won't pre-tick the DAST checkbox.
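The availability rule can be sketched in a few lines. The response shape of GET /api/dashboard/status is not documented in this section, so the per-scanner fields used below (`name`, `category`, `available`, `install_hint`) and the `category_state` helper are assumptions for illustration only.

```python
def category_state(scanners):
    """Return {category: {"enabled": bool, "hints": [...]}} from a scanner list.

    A category is enabled as soon as one of its scanners is available;
    unavailable scanners contribute their install hint.
    """
    state = {}
    for s in scanners:
        entry = state.setdefault(s["category"], {"enabled": False, "hints": []})
        if s["available"]:
            entry["enabled"] = True
        elif s.get("install_hint"):
            entry["hints"].append(f'{s["name"]}: {s["install_hint"]}')
    return state


# Hypothetical status payload, loosely mirroring the wireframe above.
status = [
    {"name": "semgrep", "category": "code", "available": True},
    {"name": "bandit", "category": "code", "available": True},
    {"name": "checkov", "category": "iac", "available": False,
     "install_hint": "pip install checkov"},
    {"name": "zap", "category": "dast", "available": False},
]

state = category_state(status)
# Only enabled categories are candidates for the default selection.
selectable = [name for name, entry in state.items() if entry["enabled"]]
print(selectable, state["iac"]["hints"])
```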

Source: frontend/src/app/scan/page.tsx.

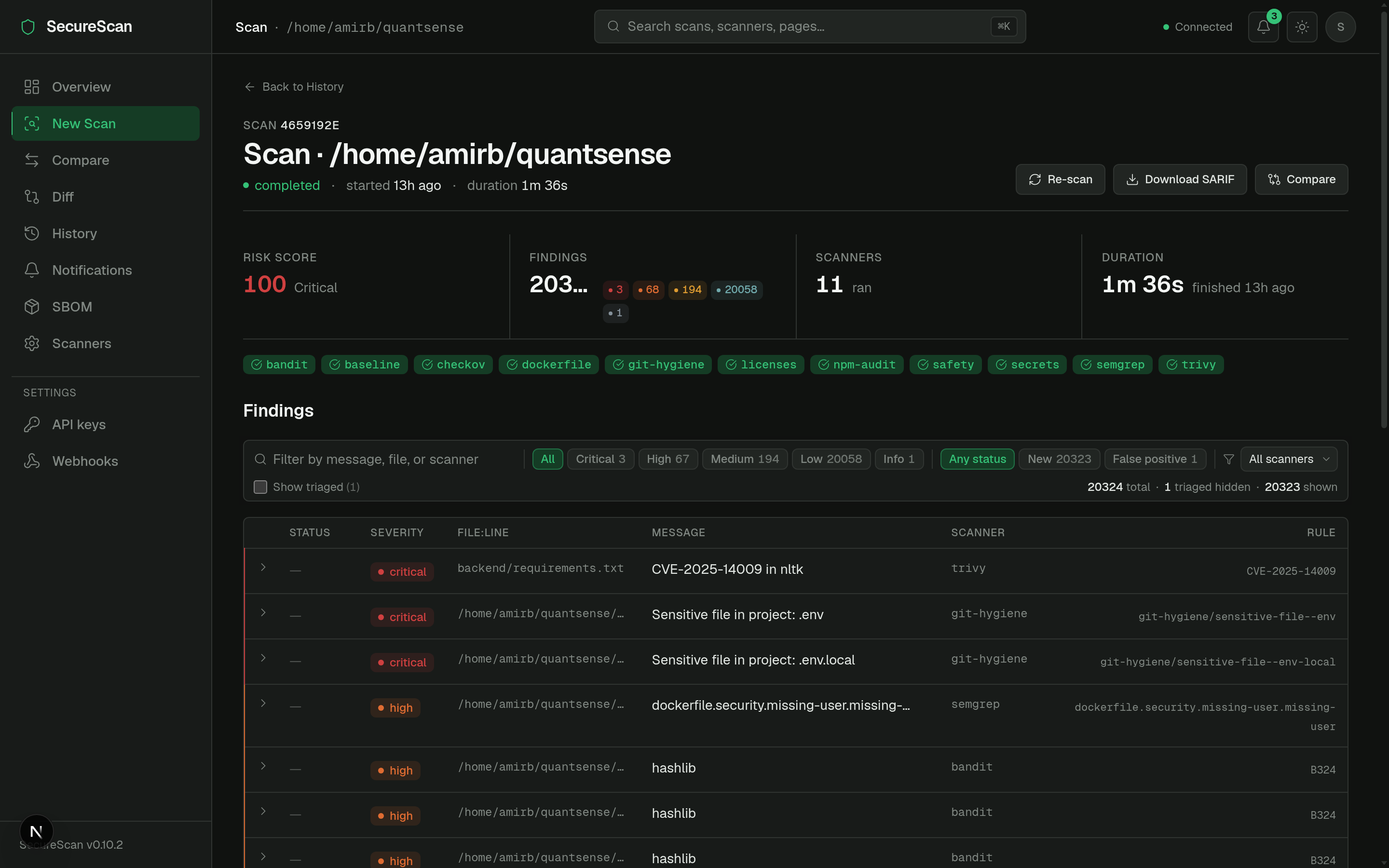

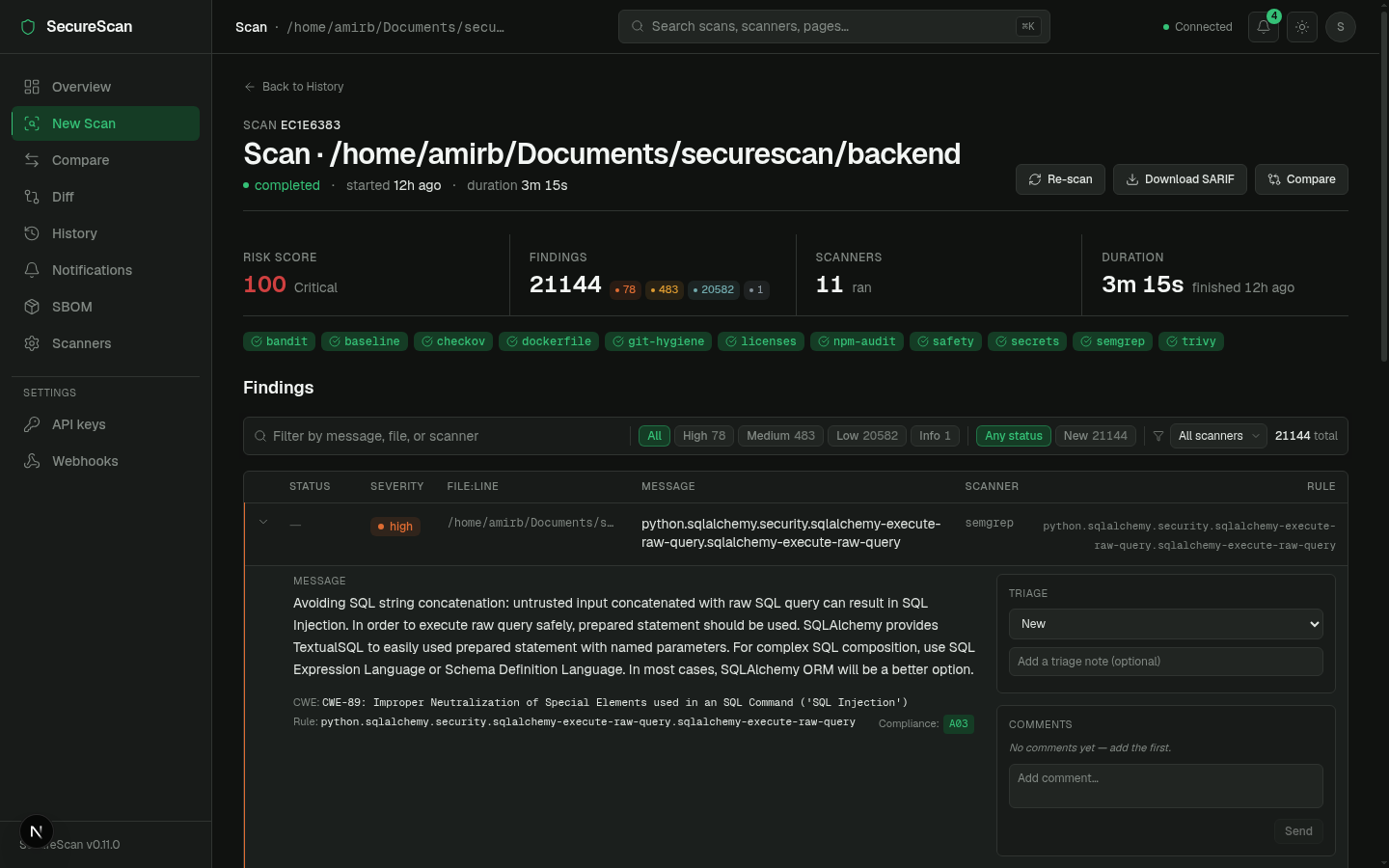

Scan detail (/scan/[id])

The page everybody spends the most time on.

PageHeader

/home/me/proj-a · scan_id 0f1a93cb · [...] [Cancel] [Re-run] [Delete]

StatLine

Risk score 34.2 · ●3 critical · ●5 high · ●2 medium · 12 scanners · 1m 22s

ScanProgressPanel (only while running/pending — v0.7.0 SSE)

●semgrep ✓ complete 124ms 7 findings

●bandit ✓ complete 62ms 3 findings

●trivy running...

●safety queued

Scanner chip strip

Ran: semgrep · bandit · trivy · safety · secrets Skipped (1): zap

Sticky filter bar

Severity: [● critical 3] [● high 5] [● medium 2] [● low 0] [● info 0]

Status: [new] [triaged] [false_positive] [accepted_risk] [fixed] [wont_fix]

[search...] [▼ Show suppressed]

Findings table (compact, sortable, severity-tinted left edge)

┃ ● critical Use of eval() backend/api.py:42 semgrep ⌃

┃ ● critical SQL injection backend/db.py:12 bandit ⌃

┃ ● high Missing X-Frame-Options (https://...) dast ⌃

...

Expand a row to reveal:

- Matched line (mono, 5-line context).

- AI explanation + remediation hint (when --ai was on; off by default in CI).

- Triage panel — status dropdown + note textarea.

- Comments panel — thread, lazy-loaded.

The live progress panel above the findings table is shown only while a scan is pending or running. It animates each scanner from queued → running → complete over Server-Sent Events.

See Real-time scan progress for the SSE flow and Triage workflow for the verdict mechanics.

Source: frontend/src/app/scan/[id]/page.tsx.

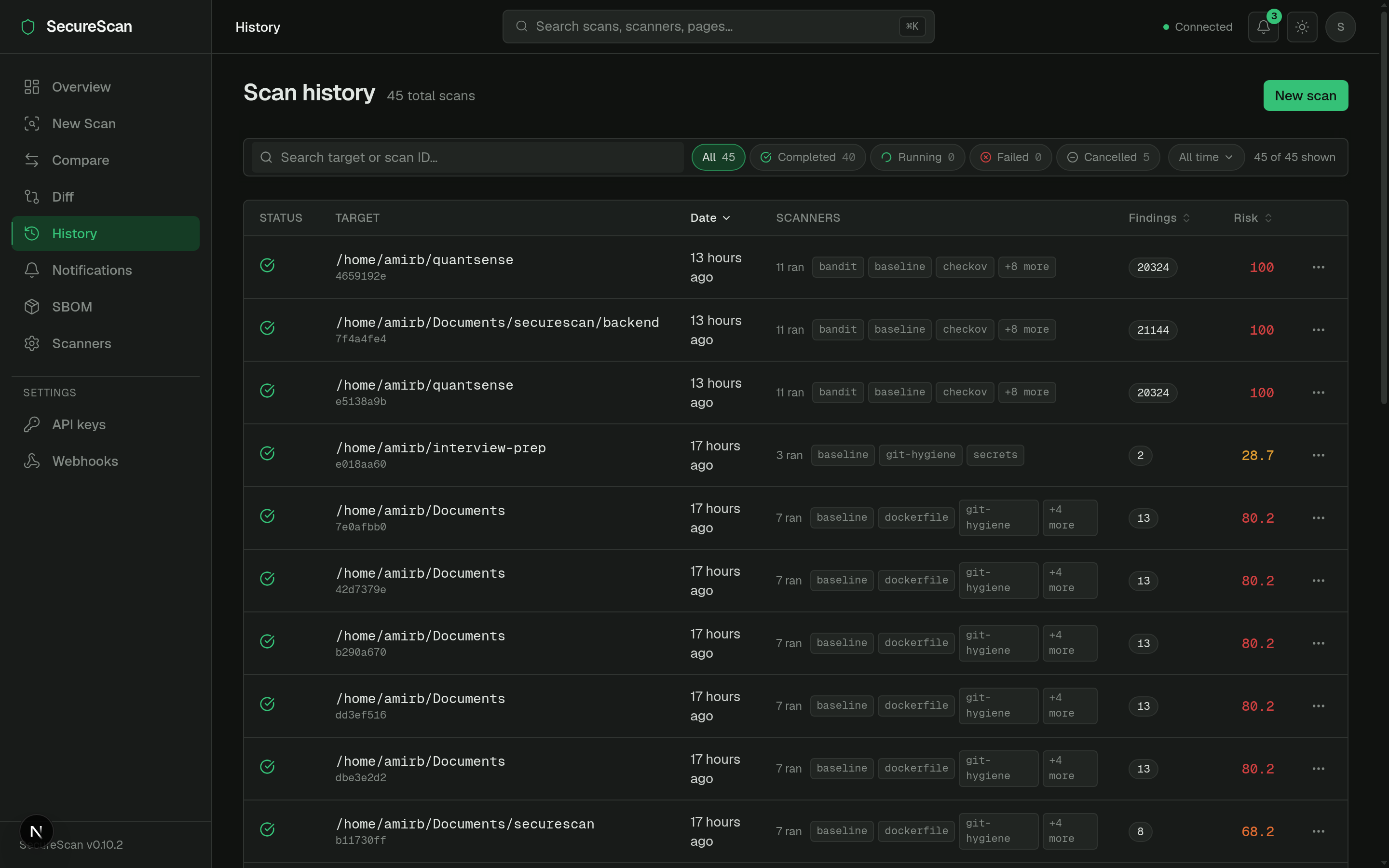

History (/history)

A real data table, not a card grid (the v0.6.0 redesign: frontend/src/app/history/page.tsx).

- Sortable columns: target, started, duration, status, finding count.

- Status icons inline (●completed / ●running / ●cancelled / ●failed).

- Mono target paths.

- Scanner chip strip per row with overflow indicator (+3 more).

- Kebab action menu per row: re-run, delete, copy link.

- URL-persisted sort + page-size — the URL is the truth, so sharing a filtered view is a copy-paste.

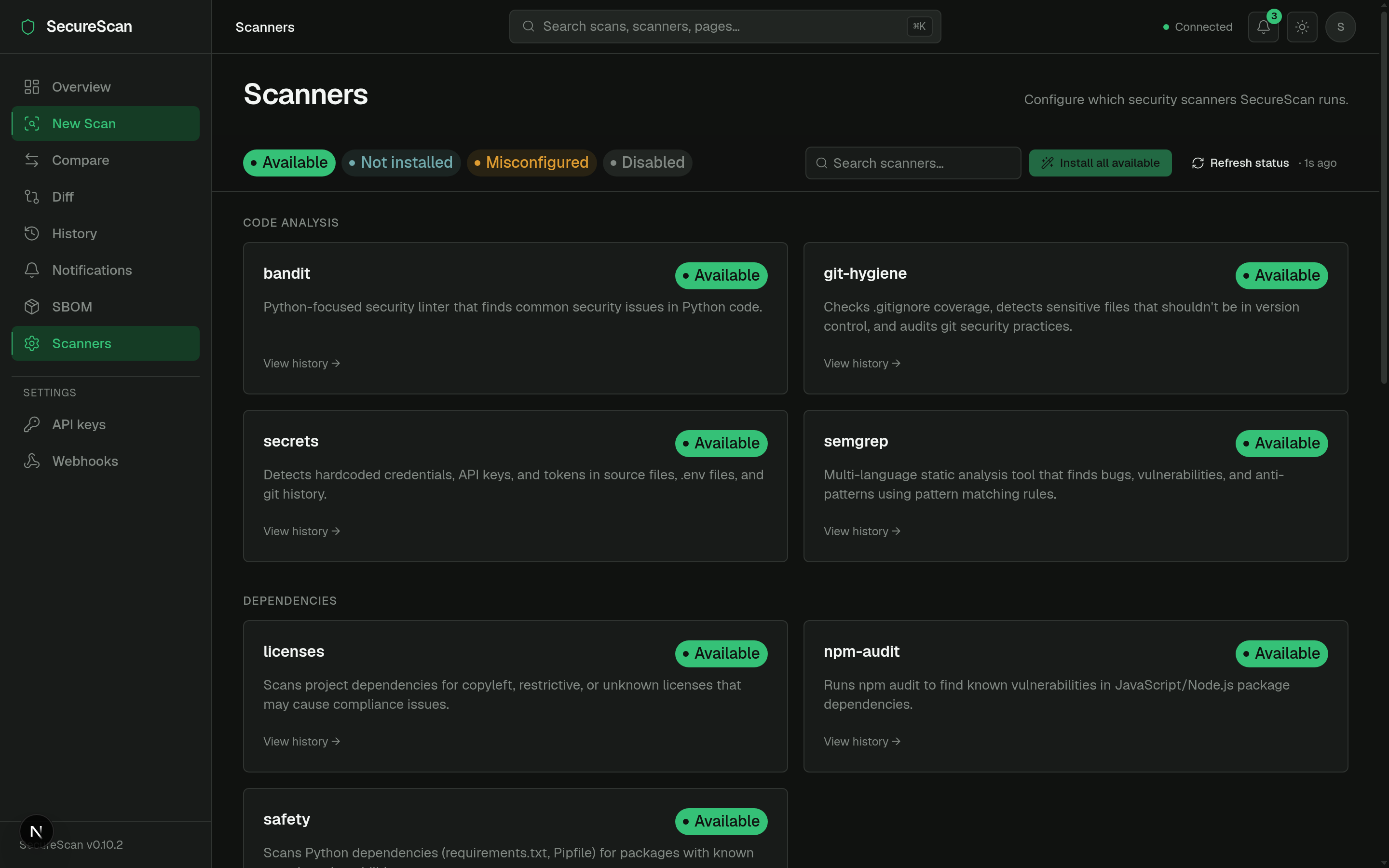

Scanners (/scanners)

Categorized scanner directory.

Code analysis ●semgrep ●bandit ●secrets ●git-hygiene

Dependencies ●trivy ●safety ●npm-audit ●licenses

Containers / IaC ●checkov ●dockerfile

Network ●nmap

Web (DAST) ●builtin_dast ●zap

- Sticky status legend + search at the top.

- Each card: name, category, version (if installed), install hint or install button (for scanners that can be pip installed).

- "Install all available" bulk action.

Diff (/diff)

PR-style scan-vs-scan comparison (FEAT1 from v0.6.0).

Base [scan picker ▾] ↔ Head [scan picker ▾]

Summary chips

▲ 3 new ▼ 2 resolved = 14 unchanged Risk Δ +12.4

Tabs: [ New (3) ] [ Resolved (2) ] [ Unchanged (14) ]

(table per tab, same columns as scan-detail)

See Diff & compare.
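The three tabs correspond to simple set operations over finding fingerprints. The sketch below assumes each finding is a dictionary with a `fingerprint` key; the `diff_scans` helper and the sample values are hypothetical and only illustrate the bucketing, not the backend's actual diff code.

```python
def diff_scans(base, head):
    """Bucket findings into new / resolved / unchanged by fingerprint."""
    base_fp = {f["fingerprint"] for f in base}
    head_fp = {f["fingerprint"] for f in head}
    return {
        "new": [f for f in head if f["fingerprint"] not in base_fp],
        "resolved": [f for f in base if f["fingerprint"] not in head_fp],
        "unchanged": [f for f in head if f["fingerprint"] in base_fp],
    }


# Hypothetical fingerprints, for illustration only.
base = [{"fingerprint": "aaa"}, {"fingerprint": "bbb"}]
head = [{"fingerprint": "bbb"}, {"fingerprint": "ccc"}]

result = diff_scans(base, head)
print({tab: len(items) for tab, items in result.items()})
```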

SBOM (/sbom)

Software Bill of Materials viewer (CycloneDX or SPDX).

- Segmented format toggle: CycloneDX / SPDX.

- Scan picker card.

- Component table with ecosystem stats (npm vs PyPI vs Crates …).

See SBOM.

Compare (/compare)

Same shape as /diff but framed for "current scan vs saved baseline" rather than "scan A vs scan B".

Notifications (/notifications)

Full feed of in-app notifications. See Notifications.

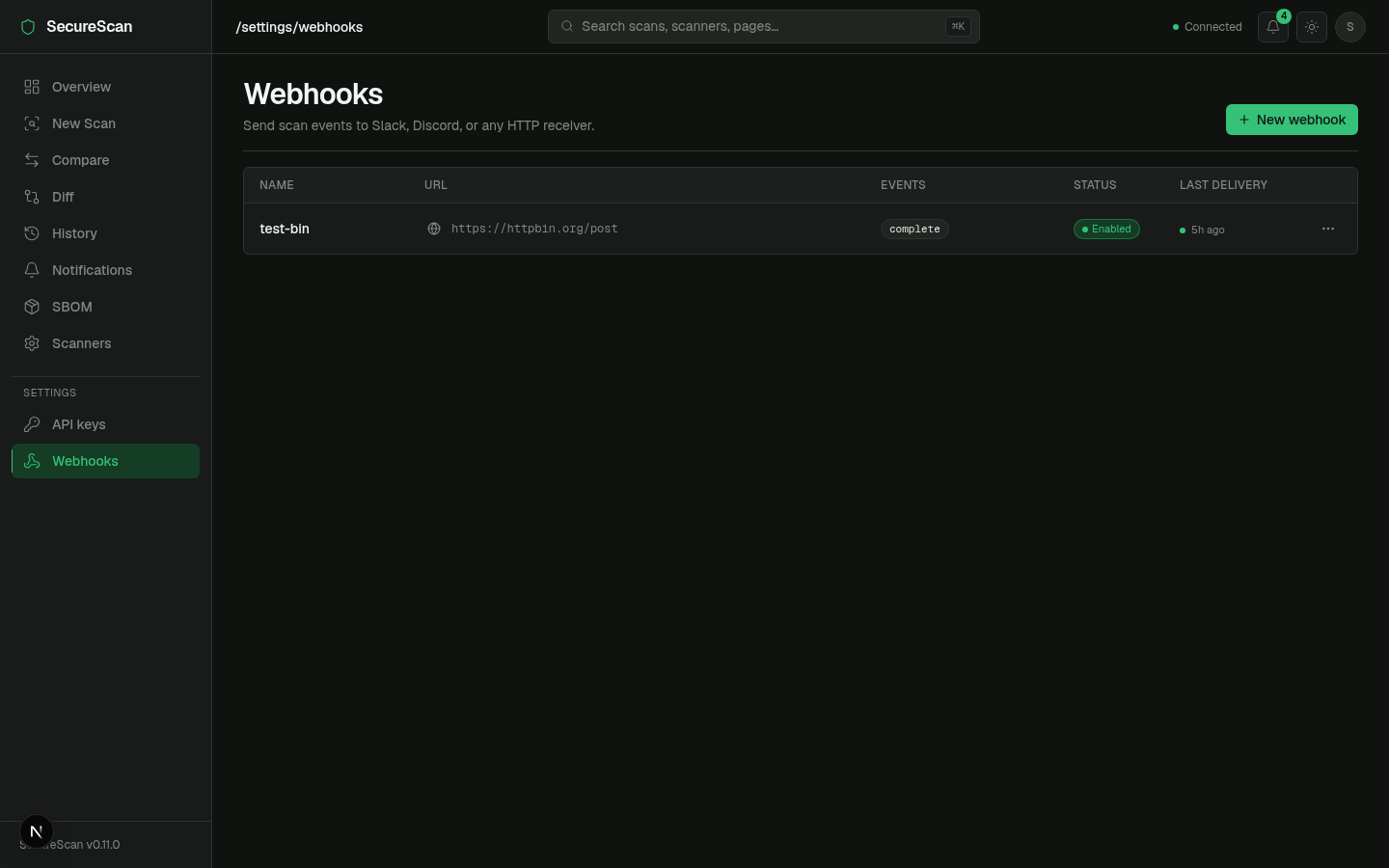

Settings

- /settings/keys — list, create, revoke API keys. See API keys.

- /settings/webhooks — list, create, edit, test webhooks. See Webhooks.

Topbar widgets

Notifications bell

Live unread badge, polled every 30s. Click → 360px popover with the 10 most recent (severity dot + title + relative timestamp). See Notifications.

API health indicator

Pings GET /ready every ~10s. Color codes:

- ● green — ready (200).

- ● amber — degraded (200 with one of the checks failing — rare).

- ● red — unreachable / 503.

Hover for the underlying check breakdown.
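The color rule reduces to a small pure function. The body of the /ready response is not specified in this section, so the sketch assumes a hypothetical `checks` object whose values are `"ok"` when a check passes; `health_color` is an illustrative name, not a function from the codebase.

```python
def health_color(status_code, body=None):
    """Map a /ready probe result to the indicator color.

    status_code is None when the request could not be completed at all.
    """
    if status_code != 200:
        return "red"  # unreachable or 503
    checks = (body or {}).get("checks", {})  # assumed response field
    if any(value != "ok" for value in checks.values()):
        return "amber"  # 200, but at least one check is failing
    return "green"


print(health_color(200, {"checks": {"db": "ok"}}))
print(health_color(200, {"checks": {"db": "ok", "queue": "failing"}}))
print(health_color(None))
```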

Theme toggle

next-themes integration. Dark default; persists to localStorage and to a cookie so SSR doesn't flash.

Command palette (⌘K)

Mounted at app root. Searches: pages, recent scans, scanners. Keyboard driven. The primary nav affordance for power users.

Auth & the dashboard

The frontend client (frontend/src/lib/api.ts) injects X-API-Key: <NEXT_PUBLIC_SECURESCAN_API_KEY> on every request when the env var is set at build time. For DB-backed keys (v0.8.0+), the flow is the same — set the key value as NEXT_PUBLIC_SECURESCAN_API_KEY.

For SSE streams (/scans/{id}/events), the dashboard exchanges that key for a short-lived event token first — see SSE event tokens.

NEXT_PUBLIC_* env vars are baked into the build and shipped to the browser. Do not put a high-trust admin key there; use a read-scope key. For dashboards exposed beyond your laptop, terminate the dashboard behind your own auth (SSO, mTLS) and treat its key as a service identity, not a user identity.
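For scripts that talk to the same API, the header injection looks roughly like the sketch below. It uses only the standard library; the base URL, the /api/v1/scans path, and the key value are placeholders assumed for the example, and `build_request` is a hypothetical helper rather than part of SecureScan.

```python
import urllib.request


def build_request(base_url, path, api_key=None):
    """Build a request, adding X-API-Key only when a key is configured."""
    req = urllib.request.Request(base_url.rstrip("/") + path)
    if api_key:
        req.add_header("X-API-Key", api_key)
    return req


# Placeholder values; use a read-scope key, never an admin key.
req = build_request("http://127.0.0.1:8000", "/api/v1/scans", api_key="example-read-key")
anonymous = build_request("http://127.0.0.1:8000", "/api/v1/scans")

# urllib normalizes header capitalization, so compare case-insensitively.
headers = {name.lower(): value for name, value in req.header_items()}
print(req.full_url, headers)
```

Pass the request to `urllib.request.urlopen(req)` to actually send it.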

Next

- Real-time scan progress — the SSE flow under the hood.

- Notifications — the bell icon's data path.

- Webhooks — outbound delivery of the same events.

- API keys — /settings/keys in detail.

Real-time scan progress

While a scan is running (or pending), the dashboard's scan-detail page does not poll. It opens a Server-Sent Events stream and renders each scanner's lifecycle as it happens — queued → running → complete / failed / skipped.

Introduced in v0.7.0; the SSE-with-auth path was finalized in v0.9.0 (SSE event tokens).

Endpoint

GET /api/v1/scans/{scan_id}/events

Accept: text/event-stream

# Authenticated deployments:

GET /api/v1/scans/{scan_id}/events?event_token=<token>

Browsers cannot send X-API-Key (or any custom header) on an EventSource. For authenticated deployments, the frontend first calls POST /api/v1/scans/{id}/event-token to mint a short-lived token, then opens the EventSource with ?event_token=.... See SSE event tokens.
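A non-browser client can follow the same two-step flow. The sketch below only builds the two URLs from the paths given above; the base URL and token value are placeholders, and the name of the token field in the mint response is not documented here, so the sketch does not parse that response.

```python
from urllib.parse import quote, urlencode


def event_token_url(base_url, scan_id):
    """URL to POST to in order to mint a short-lived event token."""
    return f"{base_url.rstrip('/')}/api/v1/scans/{quote(scan_id, safe='')}/event-token"


def events_url(base_url, scan_id, event_token=None):
    """URL of the SSE stream; the token is appended only when one is supplied."""
    url = f"{base_url.rstrip('/')}/api/v1/scans/{quote(scan_id, safe='')}/events"
    if event_token:
        url += "?" + urlencode({"event_token": event_token})
    return url


base = "http://127.0.0.1:8000"  # placeholder base URL
print(event_token_url(base, "0f1a93cb"))
print(events_url(base, "0f1a93cb", "abc123"))
```

Unauthenticated deployments skip the mint step and open the stream URL without the query parameter.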

Events emitted

| Event | When | Payload (selected fields) |
|---|---|---|
| scan.start | Orchestrator begins. | {scan_types} |
| scanner.start | A specific scanner is about to run. | {name} |
| scanner.complete | A scanner returned successfully. | {name, duration_s, findings_count} |
| scanner.skipped | A scanner is unavailable. | {name, reason, install_hint} |
| scanner.failed | A scanner crashed. | {name, error} (error truncated to 200 chars) |
| scan.complete | All scanners returned (or were skipped); status is completed. | {findings_count, risk_score, scanners_run, scanners_skipped} |
| scan.failed | Orchestrator-level failure. | {error} |
| scan.cancelled | POST /scans/{id}/cancel succeeded. | (no fields) |

scan.complete, scan.failed, and scan.cancelled are terminal events — the server closes the stream after emitting one. Source: TERMINAL constant in backend/securescan/events.py.
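A client can use the same terminal set to decide when to stop reading. The sketch below is a minimal SSE line parser that skips keepalive comments and returns after the first terminal event. The local `TERMINAL` set simply repeats the three names listed above; it is not imported from the backend. The parser is lenient in one respect: it accepts an event that carries no data line, which suits a field-less scan.cancelled.

```python
import json

# Mirrors the three terminal event names documented above (local copy).
TERMINAL = {"scan.complete", "scan.failed", "scan.cancelled"}


def parse_sse(lines):
    """Yield (event, payload) tuples from SSE lines, stopping after a terminal event."""
    event, data = None, []
    for raw in lines:
        line = raw.rstrip("\r\n")
        if line.startswith(":"):
            continue  # comment line, e.g. ": keepalive"
        if line == "":
            # A blank line ends the current event, if there is one.
            if event is not None or data:
                payload = json.loads("\n".join(data)) if data else {}
                name = event or "message"
                yield name, payload
                if name in TERMINAL:
                    return
            event, data = None, []
            continue
        field, _, value = line.partition(":")
        value = value[1:] if value.startswith(" ") else value
        if field == "event":
            event = value
        elif field == "data":
            data.append(value)


stream = [
    ": keepalive", "",
    "event: scan.start", 'data: {"scan_types":["code","dependency"]}', "",
    "event: scanner.start", 'data: {"name":"semgrep"}', "",
    "event: scan.cancelled", "",
    "event: scanner.start", 'data: {"name":"bandit"}', "",
]
events = list(parse_sse(stream))
for name, payload in events:
    print(name, payload)
```

In practice `lines` would be the decoded lines of the HTTP response body; anything after the terminal event is ignored.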

On the wire

curl -N "http://127.0.0.1:8000/api/v1/scans/$SCAN_ID/events"

: keepalive

event: scan.start

data: {"scan_types":["code","dependency"]}

event: scanner.start

data: {"name":"semgrep"}

event: scanner.complete

data: {"name":"semgrep","duration_s":4.31,"findings_count":7}

event: scanner.start

data: {"name":"bandit"}

event: scanner.complete

data: {"name":"bandit","duration_s":1.04,"findings_count":2}

event: scan.complete